I. Prerequisites

1. Rook is deployed and all of its Pods are Running

[root@k8s-master01 examples]# kubectl get po -n rook-ceph -owide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

rook-ceph-operator-d68878968-n9spk 1/1 Running 0 13m 172.25.92.90 k8s-master02 <none> <none>

rook-discover-4jsq2 1/1 Running 0 13m 172.17.125.25 k8s-node01 <none> <none>

rook-discover-bns2w 1/1 Running 0 13m 172.25.92.91 k8s-master02 <none> <none>

rook-discover-fw6gf 1/1 Running 0 13m 172.27.14.223 k8s-node02 <none> <none>

rook-discover-kkl6h 1/1 Running 0 13m 172.18.195.23 k8s-master03 <none> <none>

rook-discover-sv6zm 1/1 Running 0 13m 172.25.244.225 k8s-master01 <none> <none>

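2. Before creating the Ceph cluster, attach one or more blank (unformatted) disks to the Kubernetes nodes that will provide storage. In the output below, sdb is the blank disk on each node.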

[root@k8s-node02 ~]# lsblk -f

NAME FSTYPE LABEL UUID MOUNTPOINT

sdb

sr0 iso9660 CentOS 7 x86_64 2020-11-02-15-15-23-00

sda

├─sda2 LVM2_member FwFkVh-KQZQ-jPVX-ZFhr-KWXw-apOr-qyTTNl

│ ├─centos-swap swap f2ecae66-0aa8-4cd7-808f-137643a10360

│ └─centos-root xfs 5c040742-70c2-43bf-8923-e11c7b439723 /

└─sda1 xfs 3f980dfc-fa56-4bfe-8f58-cd70fd4fc852 /boot

[root@k8s-node01 ~]# lsblk -f

NAME FSTYPE LABEL UUID MOUNTPOINT

sdb

sr0 iso9660 CentOS 7 x86_64 2020-11-02-15-15-23-00

sda

├─sda2 LVM2_member InPkzD-1116-NcSy-VYZ6-UqAo-Xi3e-TMcXgS

│ ├─centos-swap swap 94b48f88-4f53-42fc-899d-df8f10d32e57

│ └─centos-root xfs eaefe8a0-c12d-47d5-8f73-f41e875d5102 /

└─sda1 xfs efc4bf10-7b41-41cb-9497-b4b0301615ef /boot

[root@k8s-master03 ~]# lsblk -f

NAME FSTYPE LABEL UUID MOUNTPOINT

sdb

sr0 iso9660 CentOS 7 x86_64 2020-11-02-15-15-23-00

sda

├─sda2 LVM2_member 6U2rVH-r90V-vRzK-1VAx-FQsH-XuSg-eRcwLe

│ ├─centos-swap swap d198ed44-10f3-4359-b1b8-f715c39d414e

│ └─centos-root xfs ae303dc0-0f2c-4536-a7af-e6ec9baa622f /

└─sda1 xfs 2b57b5d1-7634-4b16-9c28-2de601accd76 /boot
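One way to confirm that a disk is still blank before handing it to Rook is to ask lsblk for filesystem signatures only. The following is a minimal sketch, assuming the new disk is /dev/sdb as in the output above; `disk_is_blank` is a hypothetical helper name, not part of Rook or util-linux.

```shell
# Hypothetical helper: succeeds when neither the device nor any of its
# partitions carries a filesystem signature.
disk_is_blank() {
  # -n drops the header, -o FSTYPE prints only the filesystem-type column.
  fstypes=$(lsblk -n -o FSTYPE "$1" | tr -d '[:space:]')
  [ -z "$fstypes" ]
}

# Example call (run on each storage node):
#   disk_is_blank /dev/sdb && echo "sdb is blank"
```

For sda in the listings above the check would fail, because its partitions report xfs, LVM2_member and swap.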

II. Deploying the Ceph cluster

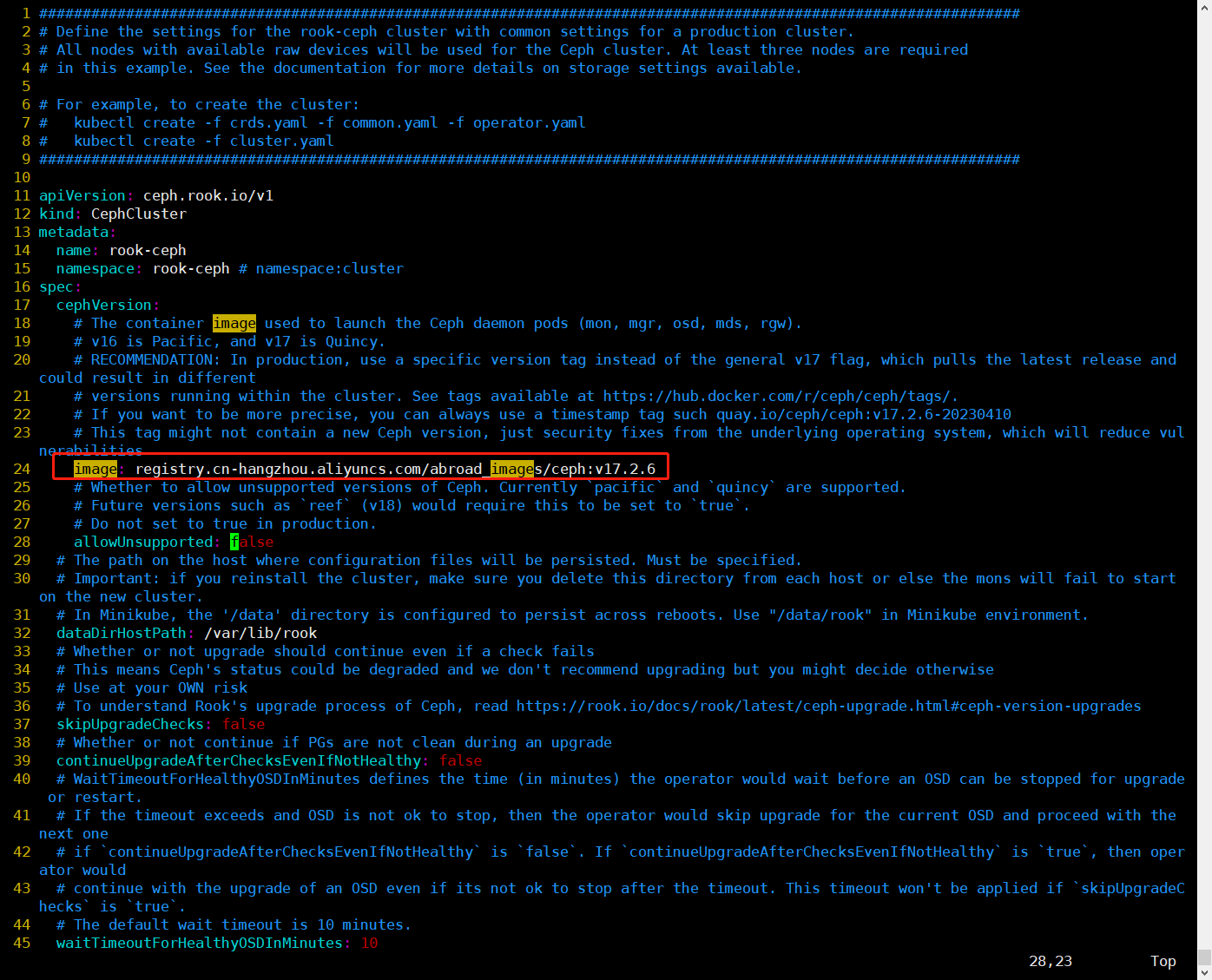

1. Edit cluster.yaml and replace the upstream image with a mirror hosted in China, that is, change quay.io/ceph/ceph:v17.2.6 to registry.cn-hangzhou.aliyuncs.com/abroad_images/ceph:v17.2.6

$ vim cluster.yaml

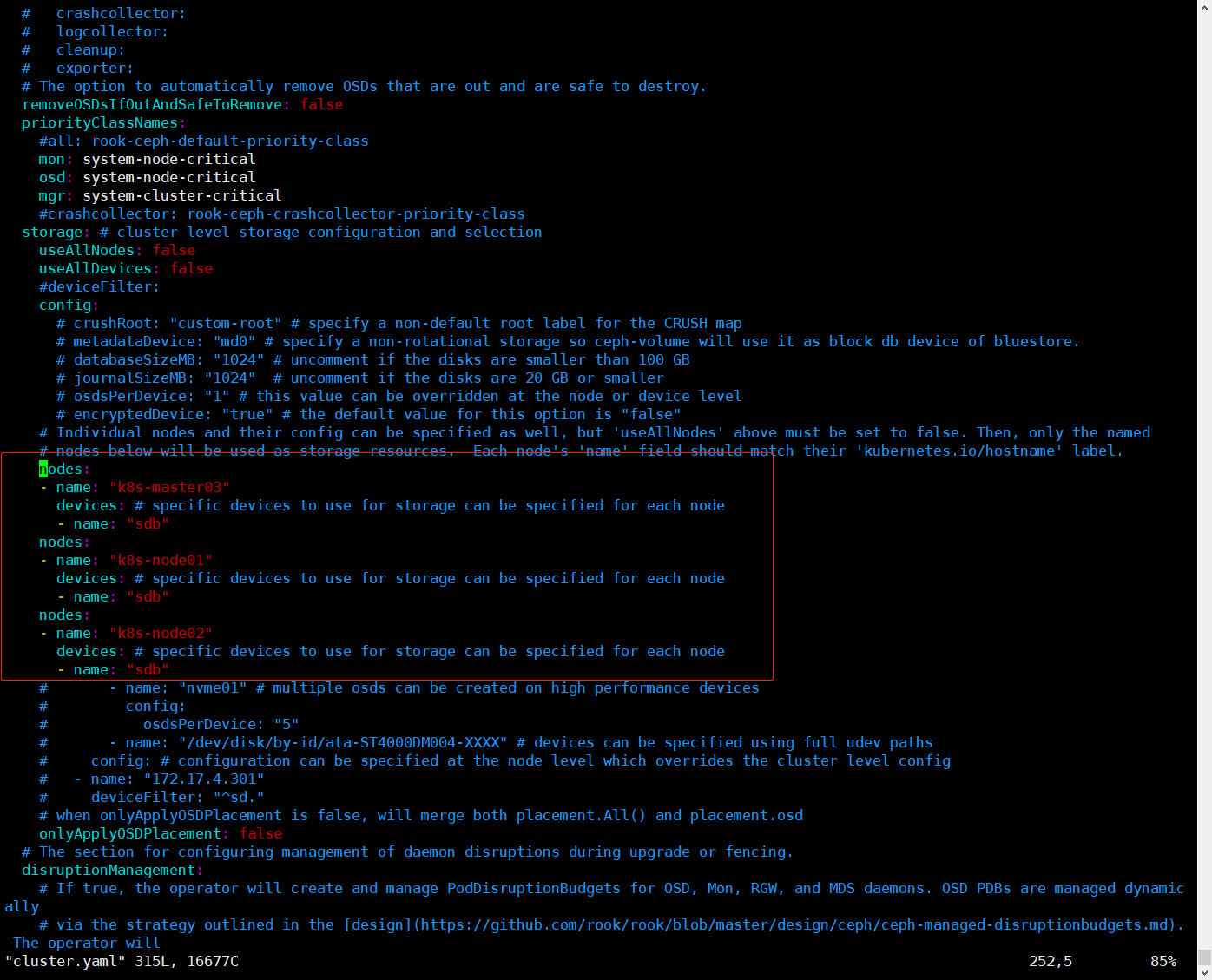
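The same substitution can be done non-interactively with sed instead of vim. This is a sketch only: the two image strings are the ones named in step 1, while `replace_ceph_image` is a hypothetical helper name and the backup suffix `.bak` is an arbitrary choice.

```shell
# Hypothetical helper: swap the upstream Ceph image for the mirror in one file.
replace_ceph_image() {
  old='quay.io/ceph/ceph:v17.2.6'
  new='registry.cn-hangzhou.aliyuncs.com/abroad_images/ceph:v17.2.6'
  # '#' is the sed delimiter because both strings contain '/'.
  # -i.bak edits in place and keeps the original as <file>.bak.
  sed -i.bak "s#${old}#${new}#g" "$1"
}

# Example call:
#   replace_ceph_image cluster.yaml && grep 'image:' cluster.yaml
```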

2. Adjust the following parameters

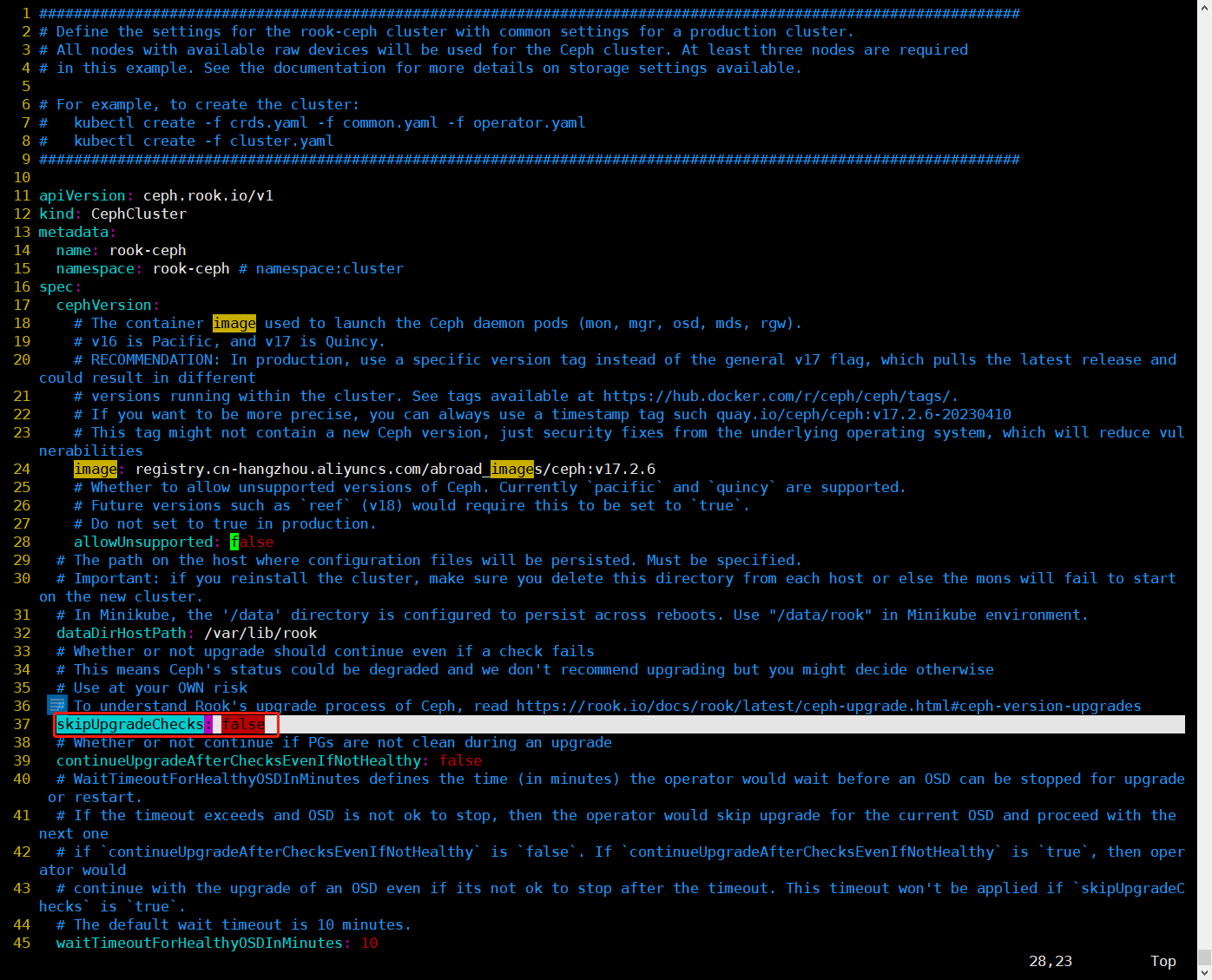

Set skipUpgradeChecks to true. With this setting Rook skips the checks it normally runs on the Ceph daemons during an upgrade.

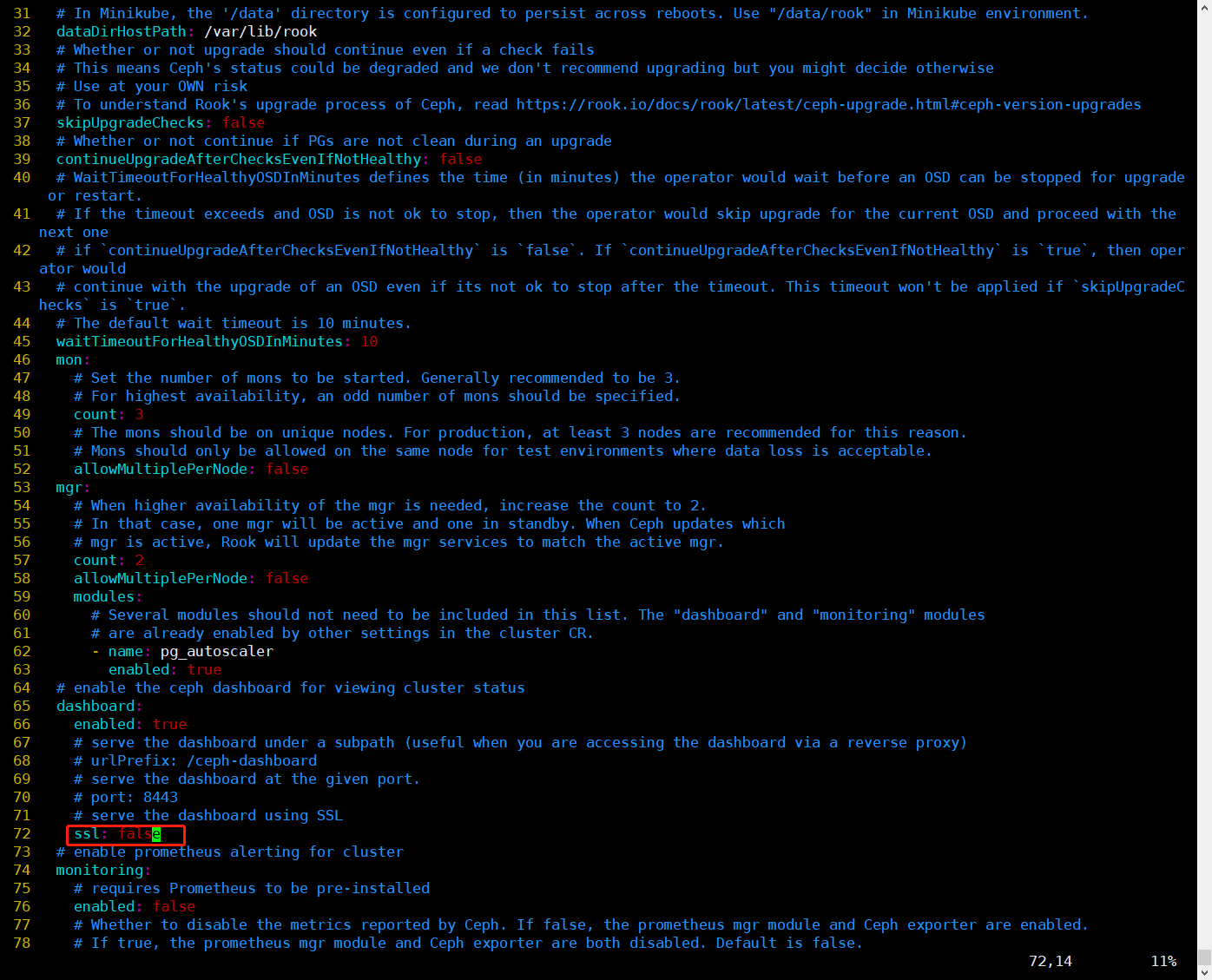

Set ssl to false. This setting controls SSL/TLS for the Ceph Dashboard. To keep things simple it is disabled here, so the Dashboard is served over an unencrypted connection.

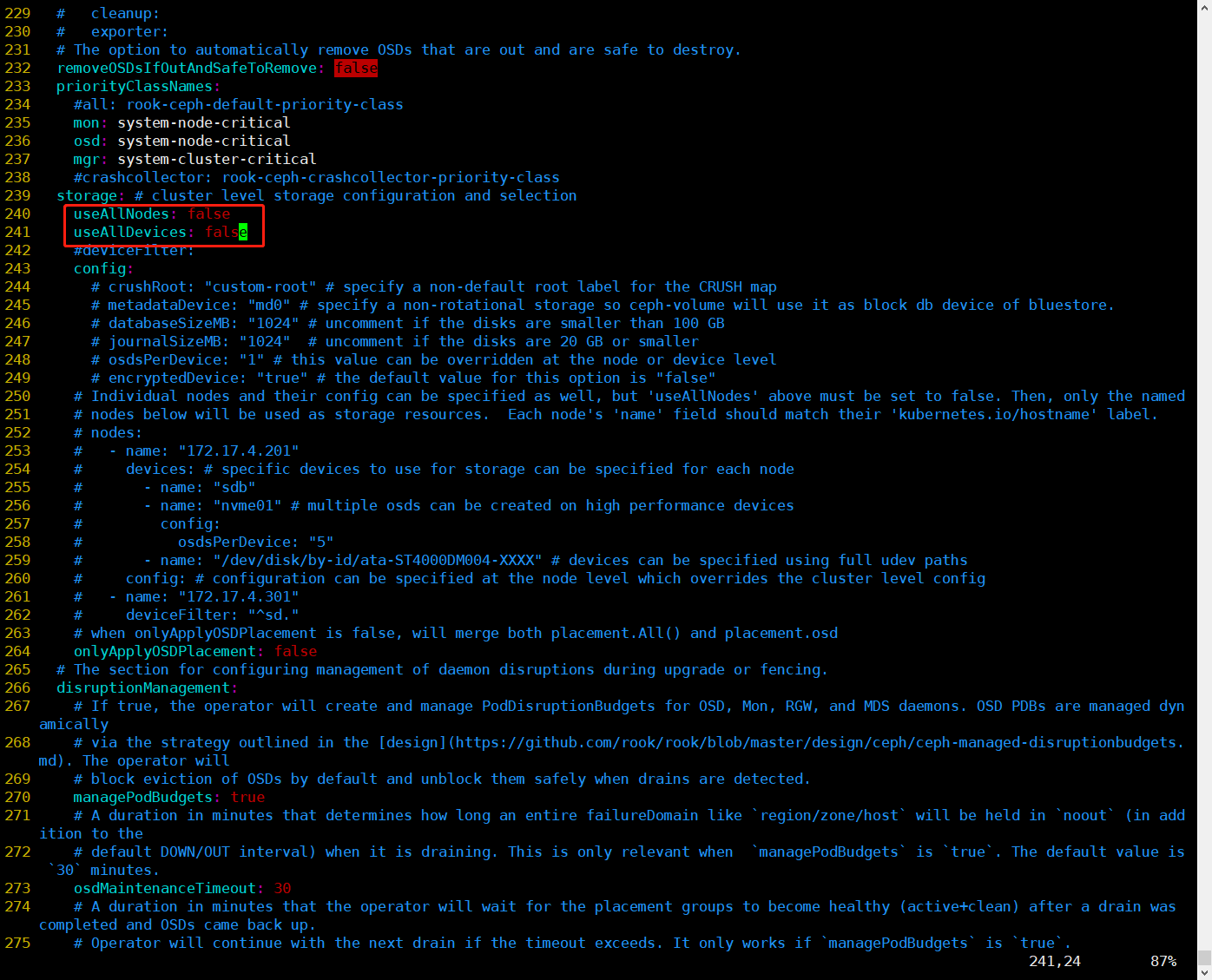

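Set both useAllNodes and useAllDevices to false.

useAllNodes controls whether every node in the cluster is used as a storage node. When it is true, all nodes can host storage devices and take part in managing pools and data. When it is false, only the nodes listed explicitly in the storage section are used.

useAllDevices controls whether every available device on a node is used for storage. When it is true, all available devices on the node, such as hard disks and SSDs, are used. When it is false, only the devices listed explicitly in the storage section are used.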

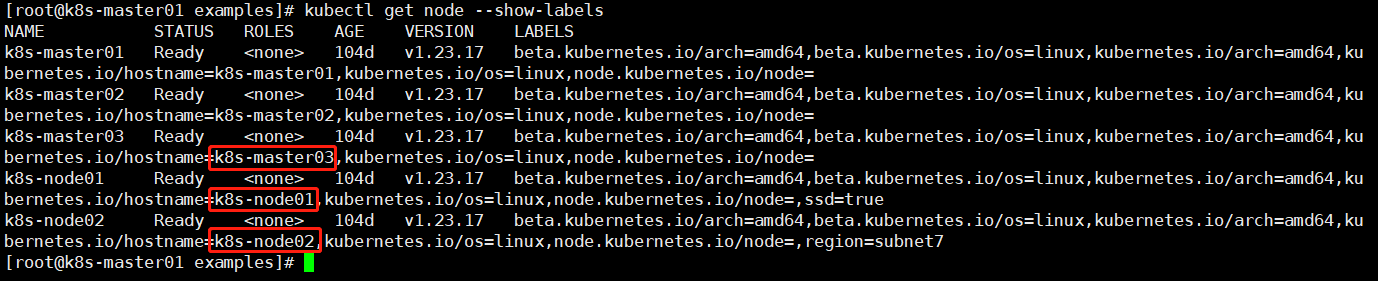

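Specify the nodes that provide storage. Here the three nodes k8s-master03, k8s-node01 and k8s-node02 are used.

The name under devices is the name of the newly added blank disk, and the name under nodes is the value of the node's kubernetes.io/hostname label, which can be looked up as follows: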

$ kubectl get node --show-labels
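Taken together, the changes from steps 1 and 2 touch only a handful of fields. The sketch below prints them as one fragment for comparison with your own cluster.yaml. It is not a complete CephCluster manifest: the field placement follows the Rook CephCluster spec, the device name sdb is assumed from the lsblk output in section I, and `print_cluster_fragment` is a hypothetical helper name.

```shell
# Hypothetical helper: print only the cluster.yaml fields changed in steps 1-2.
# All other fields of the CephCluster manifest are omitted here.
print_cluster_fragment() {
  cat <<'EOF'
spec:
  cephVersion:
    image: registry.cn-hangzhou.aliyuncs.com/abroad_images/ceph:v17.2.6
  skipUpgradeChecks: true
  dashboard:
    ssl: false
  storage:
    useAllNodes: false
    useAllDevices: false
    nodes:
      - name: "k8s-master03"   # must match the kubernetes.io/hostname label
        devices:
          - name: "sdb"        # blank disk reported by lsblk -f
      - name: "k8s-node01"
        devices:
          - name: "sdb"
      - name: "k8s-node02"
        devices:
          - name: "sdb"
EOF
}

print_cluster_fragment
```

To see only the hostname label rather than every label, `kubectl get nodes -L kubernetes.io/hostname` adds it as a single column.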

3. Deploy the Ceph cluster

$ cd /root/rook/deploy/examples

$ kubectl create -f cluster.yaml

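4. Wait until all Pods have started and confirm that there are 3 OSDs, up and in (the listing below shows the three rook-ceph-osd Pods Running)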

[root@k8s-master01 examples]# kubectl -n rook-ceph get po

NAME READY STATUS RESTARTS AGE

csi-cephfsplugin-5dhs9 2/2 Running 0 60m

csi-cephfsplugin-gcq4q 2/2 Running 0 60m

csi-cephfsplugin-jw7cr 2/2 Running 0 60m

csi-cephfsplugin-lmvzz 2/2 Running 0 60m

csi-cephfsplugin-provisioner-ff466fcdb-cwg2v 5/5 Running 0 60m

csi-cephfsplugin-provisioner-ff466fcdb-ltjvf 5/5 Running 0 60m

csi-cephfsplugin-x5588 2/2 Running 0 60m

csi-rbdplugin-28czk 2/2 Running 0 60m

csi-rbdplugin-9m26r 2/2 Running 0 60m

csi-rbdplugin-msq6s 2/2 Running 0 60m

csi-rbdplugin-n5ts6 2/2 Running 0 60m

csi-rbdplugin-provisioner-7475994d8b-xft2l 5/5 Running 0 60m

csi-rbdplugin-provisioner-7475994d8b-znqtz 5/5 Running 0 60m

csi-rbdplugin-rwjw6 2/2 Running 0 60m

rook-ceph-crashcollector-k8s-master02-ff6fcb75-mp9ng 1/1 Running 0 31m

rook-ceph-crashcollector-k8s-master03-79f7556f44-fm29g 1/1 Running 0 22s

rook-ceph-crashcollector-k8s-node01-fff4888cb-d9tdc 1/1 Running 0 31m

rook-ceph-crashcollector-k8s-node02-6dd646b59f-qgwgh 1/1 Running 0 31m

rook-ceph-mgr-a-6bbf68c6d9-rn975 3/3 Running 0 31m

rook-ceph-mgr-b-cb84d4b7b-srjz5 3/3 Running 0 31m

rook-ceph-mon-a-5665457dc9-9dzvl 2/2 Running 0 58m

rook-ceph-mon-b-659dc6846c-2vrbs 2/2 Running 0 37m

rook-ceph-mon-c-596d58f5b-ms82p 2/2 Running 0 32m

rook-ceph-operator-d68878968-lhqwp 1/1 Running 0 88m

rook-ceph-osd-0-8d7f75668-qr2g8 2/2 Running 0 31m

rook-ceph-osd-1-8464f446f5-j74zc 2/2 Running 0 7m6s

rook-ceph-osd-2-7f6f486cdf-p7sb5 2/2 Running 0 22s

rook-ceph-osd-prepare-k8s-master03-9v25v 0/1 Completed 0 7m12s

rook-ceph-osd-prepare-k8s-node01-nsq49 0/1 Completed 0 7m11s

rook-ceph-osd-prepare-k8s-node02-xzxj7 0/1 Completed 0 7m8s

rook-discover-2ctxq 1/1 Running 0 82m

rook-discover-6zkhd 1/1 Running 0 82m

rook-discover-mbjf7 1/1 Running 0 82m

rook-discover-r6p7s 1/1 Running 0 82m

rook-discover-swhrh 1/1 Running 0 82m
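Counting the OSD Pods by eye gets tedious on larger clusters. The sketch below counts them from the same listing. `count_running_osds` is a hypothetical helper name, and a Running Pod is only a proxy here: it does not by itself prove that Ceph reports the OSD as up and in.

```shell
# Hypothetical helper: read `kubectl -n rook-ceph get po` output on stdin and
# count rook-ceph-osd-<id>-... Pods whose STATUS column is Running.
# The pattern requires a numeric id, so osd-prepare job Pods are not counted.
count_running_osds() {
  awk '$1 ~ /^rook-ceph-osd-[0-9]+-/ && $3 == "Running" { n++ } END { print n + 0 }'
}

# Example call:
#   kubectl -n rook-ceph get po | count_running_osds
```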

5. Check the CephCluster status and confirm that it is healthy

[root@k8s-master01 examples]# kubectl get cephcluster -n rook-ceph

NAME DATADIRHOSTPATH MONCOUNT AGE PHASE MESSAGE HEALTH EXTERNAL FSID

rook-ceph /var/lib/rook 3 74m Ready Cluster created successfully HEALTH_OK 1fb7e7e8-4cae-4fc9-9ca1-274e75a14c51
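For scripting, the PHASE and HEALTH columns can be read directly from the resource status instead of parsing the table. This is a sketch under the assumption that the cluster is named rook-ceph in the rook-ceph namespace, as in the output above; `cluster_is_healthy` is a hypothetical helper name.

```shell
# Hypothetical helper: succeeds only when the CephCluster reports
# phase Ready and health HEALTH_OK.
cluster_is_healthy() {
  # .status.phase and .status.ceph.health back the PHASE and HEALTH columns.
  status=$(kubectl -n rook-ceph get cephcluster rook-ceph \
    -o jsonpath='{.status.phase} {.status.ceph.health}')
  [ "$status" = "Ready HEALTH_OK" ]
}

# Example call:
#   cluster_is_healthy && echo "Ceph cluster is ready"
```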

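III. Installing the Ceph snapshot controller

On Kubernetes 1.19 and later, the snapshot controller has to be installed separately before PVC snapshots work, so it is installed here in advance. On versions below 1.19, no separate installation is needed.

1. Download the source files

(1) Clone the repository on the Master01 node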

$ git clone https://gitee.com/jeckjohn/k8s-ha-install.git

(2) On the Master01 node, list the branches

$ cd k8s-ha-install

$ git branch -a

* master

remotes/origin/HEAD -> origin/master

remotes/origin/manual-installation-v1.16.x

remotes/origin/manual-installation-v1.17.x

remotes/origin/manual-installation-v1.18.x

remotes/origin/manual-installation-v1.19.x

remotes/origin/manual-installation-v1.20.x

remotes/origin/manual-installation-v1.20.x-csi-hostpath

remotes/origin/manual-installation-v1.21.x

remotes/origin/manual-installation-v1.22.x

remotes/origin/manual-installation-v1.23.x

remotes/origin/manual-installation-v1.24.x

remotes/origin/manual-installation-v1.25.x

remotes/origin/master

(3) On the Master01 node, switch to the manual-installation-v1.23.x branch (tracking remotes/origin/manual-installation-v1.23.x)

[root@k8s-master01 k8s-ha-install]# git checkout manual-installation-v1.23.x

2. Install the snapshot controller

[root@k8s-master01 ~]# cd k8s-ha-install

[root@k8s-master01 k8s-ha-install]# kubectl create -f snapshotter/ -n kube-system

3. Verify the installation; the controller Pod should be Running

[root@k8s-master01 k8s-ha-install]# kubectl get po -n kube-system -l app=snapshot-controller

NAME READY STATUS RESTARTS AGE

snapshot-controller-0 1/1 Running 0 108s