1. Machine information

| No. | Hostname | IP |
|---|---|---|
| 1 | ceph1 | 192.168.1.41 |
| 2 | ceph2 | 192.168.1.42 |
| 3 | ceph3 | 192.168.1.43 |

2. Preparation

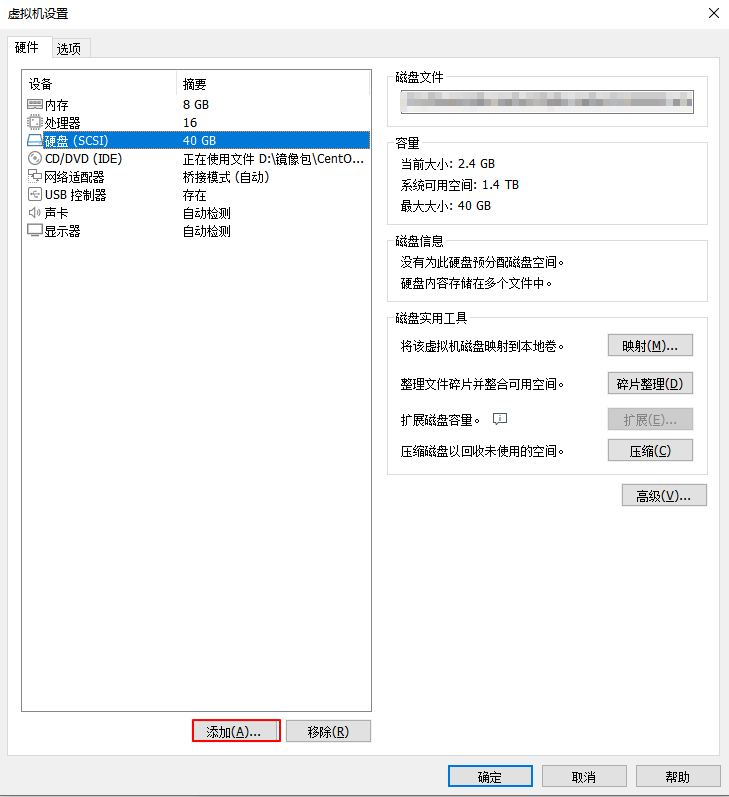

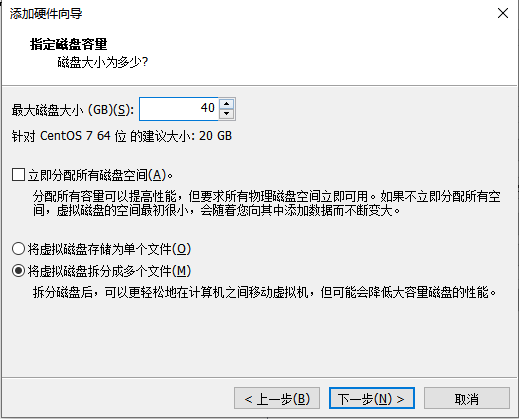

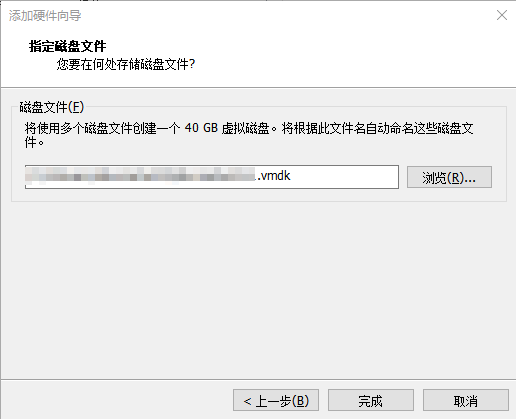

(1)为三个存储节点配备一个容量为40G的裸盘,不能格式化

点击【硬盘】

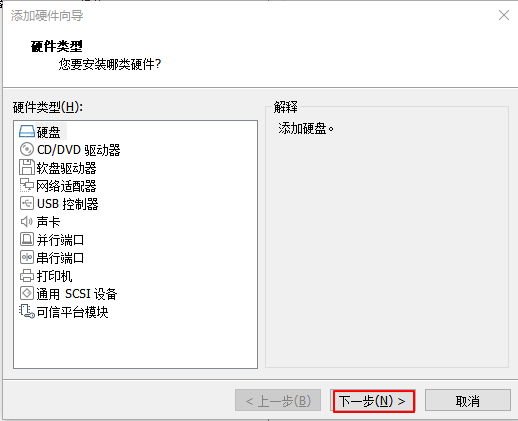

点击【添加】

点击【下一步】

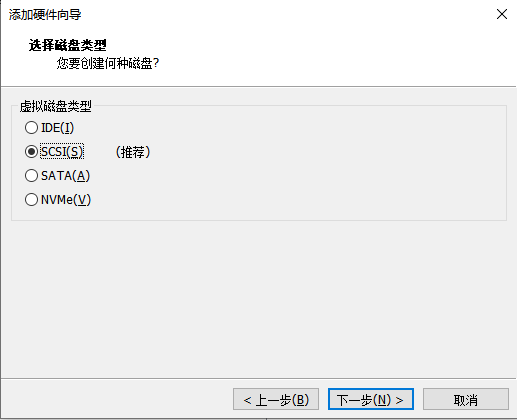

点击【下一步】

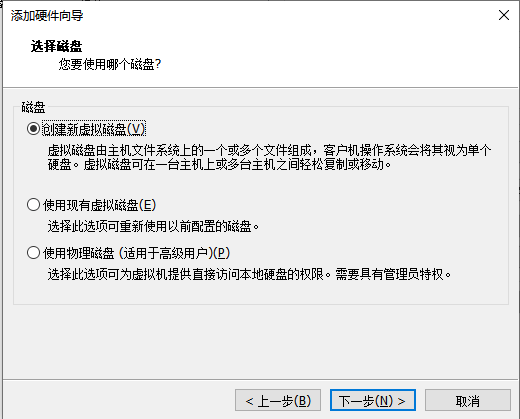

点击【下一步】

设置磁盘大小为40G,点击【下一步】

点击【完成】-【确定】

分区或设备不能使用文件系统进行格式化

$ lsblk -f

NAME FSTYPE LABEL UUID MOUNTPOINT

sdb

sr0 iso9660 CentOS 7 x86_64 2020-11-02-15-15-23-00

sda

├─sda2 LVM2_member 1Kfafb-CLib-CT03-yJsv-ew6f-LcNJ-Inegz9

│ ├─centos-swap swap 6f8695f6-ecb0-4abc-b256-1aacd32adc7e

│ └─centos-root xfs 8ddcb3d5-fdb8-40a2-a3bd-3737d124cc7a /

└─sda1 xfs 40ff112d-b3a9-480e-b7ce-4dc6c7564b6d /boot
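The same check can be scripted by asking `lsblk` for the FSTYPE column only. This is a minimal sketch, assuming the new disk is /dev/sdb as in the output above; the function names are illustrative and not part of any tool.

```shell
#!/bin/sh
# Succeeds when the FSTYPE text passed in is blank, i.e. lsblk reported
# no filesystem (or LVM) signature on the device or its partitions.
fstype_is_empty() {
    [ -z "$(printf '%s' "$1" | tr -d '[:space:]')" ]
}

# Hypothetical helper: report whether a device still looks like a raw disk.
check_raw_disk() {
    dev="$1"
    if fstype_is_empty "$(lsblk -n -o FSTYPE "$dev")"; then
        echo "$dev: no filesystem signature found"
    else
        echo "$dev: a filesystem signature is present" >&2
        return 1
    fi
}

# Usage (on each storage node):
#   check_raw_disk /dev/sdb
```

Only the definitions run when the snippet is sourced; nothing touches a disk until `check_raw_disk` is called.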

(2) Check the environment

Check whether the clocks are synchronized

$ date

If they are not, install the chrony time synchronization service on every machine

$ yum install -y chrony

$ systemctl start chronyd && systemctl enable chronyd
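Once chronyd is running, `chronyc tracking` reports whether the clock has actually synchronized. The sketch below checks the "Leap status" line of that output. It treats anything other than `Normal` as not yet synchronized, which is a simplifying assumption, and the function name is illustrative.

```shell
#!/bin/sh
# Reads `chronyc tracking` output on stdin and succeeds only when chrony
# reports "Leap status : Normal". A value such as "Not synchronised"
# makes the check fail.
clock_is_synchronised() {
    grep -Eq '^Leap status[[:space:]]*:[[:space:]]*Normal'
}

# Usage (on each machine):
#   chronyc tracking | clock_is_synchronised && echo "clock synchronised"
```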

Check whether the firewall, swap, and SELinux are disabled. If any of them is still enabled, run the following commands to disable it

$ systemctl disable --now firewalld

$ setenforce 0

$ sed -i --follow-symlinks 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/sysconfig/selinux

$ sed -i 's#SELINUX=enforcing#SELINUX=disabled#g' /etc/selinux/config

$ swapoff -a && sysctl -w vm.swappiness=0

$ sed -ri '/^[^#]*swap/s@^@#@' /etc/fstab
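Because the last command edits /etc/fstab in place, it can be worth previewing the result first. The sketch below runs the same sed expression without `-i`, so the file is only printed and not modified; the function name is illustrative.

```shell
#!/bin/sh
# Prints what the given fstab would look like after commenting out every
# not-yet-commented line that mentions swap. The file itself is unchanged.
preview_swap_comment() {
    sed '/^[^#]*swap/s@^@#@' "$1"
}

# Usage:
#   preview_swap_comment /etc/fstab
```

Lines that already start with `#` are left alone, so running the real command more than once does not stack extra comment markers.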

(3) Set the hostname. Run each command on its corresponding node

$ hostnamectl set-hostname ceph1

$ hostnamectl set-hostname ceph2

$ hostnamectl set-hostname ceph3

(4) Configure the /etc/hosts file on every machine

$ vi /etc/hosts

192.168.1.41 ceph1

192.168.1.42 ceph2

192.168.1.43 ceph3
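If the entries are added by script rather than by hand in vi, it helps to make the step safe to repeat. The following is a minimal sketch with a hypothetical helper name. It appends an entry only when the hostname is not already present, and it accepts an alternative file path so it can be tried on a copy first.

```shell
#!/bin/sh
# Appends "IP hostname" to a hosts file unless the hostname is already listed.
# The third argument defaults to /etc/hosts; pass another path to test safely.
add_host_entry() {
    ip="$1"
    name="$2"
    file="${3:-/etc/hosts}"
    # -w matches the hostname as a whole word, -F treats it as a literal string
    if ! grep -qwF -- "$name" "$file"; then
        printf '%s %s\n' "$ip" "$name" >> "$file"
    fi
}

# Usage (as root, on every machine):
#   add_host_entry 192.168.1.41 ceph1
#   add_host_entry 192.168.1.42 ceph2
#   add_host_entry 192.168.1.43 ceph3
```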

(5) Configure the yum repository on the ceph1 node

$ vi /etc/yum.repos.d/ceph.repo

[ceph]

name=ceph

baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/x86_64/

gpgcheck=0

priority=1

[ceph-noarch]

name=cephnoarch

baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/noarch/

gpgcheck=0

priority=1

[ceph-source]

name=Ceph source packages

baseurl=http://mirrors.aliyun.com/ceph/rpm-pacific/el8/SRPMS

gpgcheck=0

priority=1

(6) Install docker-ce on every machine

Install the yum-utils package

$ yum install -y yum-utils

Add Docker's official yum repository. Skip this step if it is already configured

$ yum-config-manager \

--add-repo \

https://download.docker.com/linux/centos/docker-ce.repo

Install docker-ce

$ yum install -y docker-ce

Start the service and enable it at boot

$ systemctl start docker

$ systemctl enable docker

(7) Install python3 and lvm2 on every machine

$ yum install -y python3 lvm2

3. Deployment

(1) Install cephadm on the ceph1 node

$ yum install -y cephadm

(2) Replace the overseas images with domestic mirror images

The overseas image definitions that need to be replaced are:

DEFAULT_IMAGE = 'quay.io/ceph/ceph:v16'

DEFAULT_IMAGE_IS_MASTER = False

DEFAULT_IMAGE_RELEASE = 'pacific'

DEFAULT_PROMETHEUS_IMAGE = 'quay.io/prometheus/prometheus:v2.33.4'

DEFAULT_NODE_EXPORTER_IMAGE = 'quay.io/prometheus/node-exporter:v1.3.1'

DEFAULT_ALERT_MANAGER_IMAGE = 'quay.io/prometheus/alertmanager:v0.23.0'

DEFAULT_GRAFANA_IMAGE = 'quay.io/ceph/ceph-grafana:8.3.5'

DEFAULT_HAPROXY_IMAGE = 'docker.io/library/haproxy:2.3'

DEFAULT_KEEPALIVED_IMAGE = 'docker.io/arcts/keepalived'

DEFAULT_SNMP_GATEWAY_IMAGE = 'docker.io/maxwo/snmp-notifier:v1.2.1'

DEFAULT_REGISTRY = 'docker.io' # normalize unqualified digests to this

After the replacement, the definitions in /usr/sbin/cephadm point at the domestic mirror:

$ vi /usr/sbin/cephadm

...

...

DEFAULT_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/ceph:v16'

DEFAULT_IMAGE_IS_MASTER = False

DEFAULT_IMAGE_RELEASE = 'pacific'

DEFAULT_PROMETHEUS_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/prometheus:v2.33.4'

DEFAULT_NODE_EXPORTER_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/node-exporter:v1.3.1'

DEFAULT_ALERT_MANAGER_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/alertmanager:v0.23.0'

DEFAULT_GRAFANA_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/ceph-grafana:8.3.5'

DEFAULT_HAPROXY_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/haproxy:2.3'

DEFAULT_KEEPALIVED_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/keepalived:latest'

DEFAULT_SNMP_GATEWAY_IMAGE = 'registry.cn-hangzhou.aliyuncs.com/abroad_images/snmp-notifier:v1.2.1'

DEFAULT_REGISTRY = 'registry.cn-hangzhou.aliyuncs.com'
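Editing these constants by hand in vi is easy to get wrong, so the substitution can also be expressed with sed. The sketch below is one possible way to do it, not the method used in this guide. It assumes the constants start at the beginning of a line, as shown above, and that the mirror namespace is the `abroad_images` one used here. It prints the rewritten file instead of modifying it, and it leaves the keepalived image without an explicit `:latest` tag. The function and variable names are illustrative.

```shell
#!/bin/sh
# Assumption: mirror registry and namespace as used in this guide.
MIRROR_HOST='registry.cn-hangzhou.aliyuncs.com'
MIRROR="${MIRROR_HOST}/abroad_images"

# Prints the given file with the DEFAULT_*_IMAGE constants and
# DEFAULT_REGISTRY pointed at the mirror. The input file is not modified,
# so the result can be reviewed before it replaces the original.
rewrite_default_images() {
    sed -E \
        -e "/^DEFAULT_[A-Z_]*IMAGE = /s#'(quay\.io|docker\.io)/[^/']+/#'${MIRROR}/#" \
        -e "s#^(DEFAULT_REGISTRY = )'docker\.io'#\1'${MIRROR_HOST}'#" \
        "$1"
}

# Usage (keep a backup, review the diff, then write the result back):
#   cp /usr/sbin/cephadm /usr/sbin/cephadm.bak
#   rewrite_default_images /usr/sbin/cephadm.bak | diff /usr/sbin/cephadm.bak -
#   rewrite_default_images /usr/sbin/cephadm.bak > /usr/sbin/cephadm
```

Lines such as `DEFAULT_IMAGE_IS_MASTER = False` do not match either expression and pass through unchanged.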

(3) Deploy Ceph with cephadm on the ceph1 node

$ python3.6 /usr/sbin/cephadm bootstrap --mon-ip 192.168.1.41

Note the username and password printed at the end of the output

Ceph Dashboard is now available at:

URL: https://ceph1:8443/

User: admin

Password: 5zs8krta5w

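(4) Open https://192.168.1.41:8443 in a browser to access the dashboard. Initial username: admin; initial password: 5zs8krta5w. After the first login you are required to change the password; here I set it to admin123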

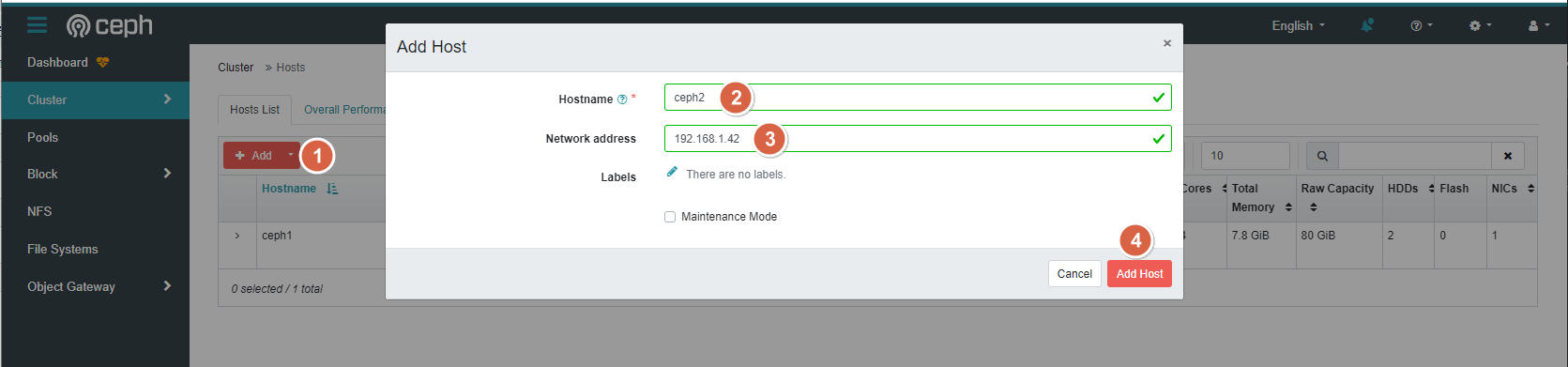

4. Add hosts

First, enter the ceph shell

[root@ceph1 ~]# python3.6 /usr/sbin/cephadm shell

Inferring fsid 0bd0bee0-7704-11ee-ac45-000c295baf08

Using recent ceph image quay.io/ceph/ceph@sha256:4924a393f5ef4c00e133c13cb8297558f4d1f52731eb16841906519e5de60063

[ceph: root@ceph1 /]#

Export the cluster's SSH public key

[ceph: root@ceph1 /]# ceph cephadm get-pub-key > ~/ceph.pub

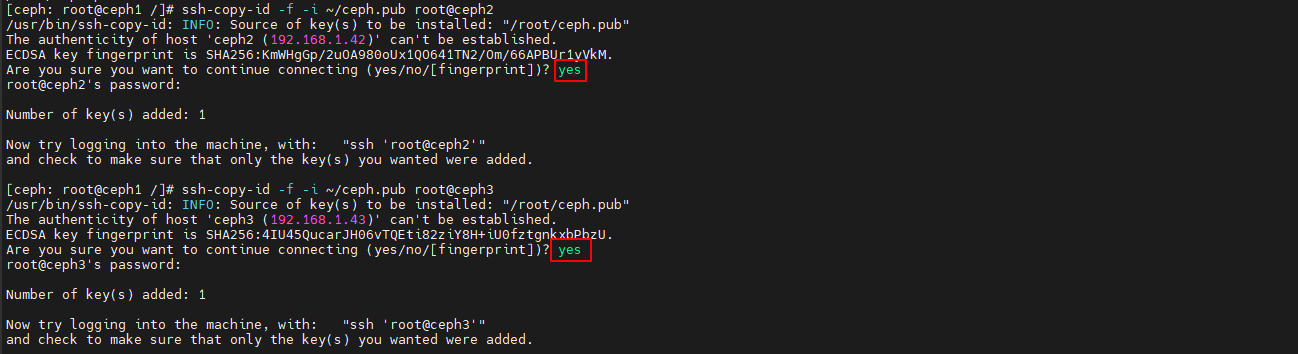

Copy the key to the other two machines to enable passwordless login

[ceph: root@ceph1 /]# ssh-copy-id -f -i ~/ceph.pub root@ceph2

[ceph: root@ceph1 /]# ssh-copy-id -f -i ~/ceph.pub root@ceph3

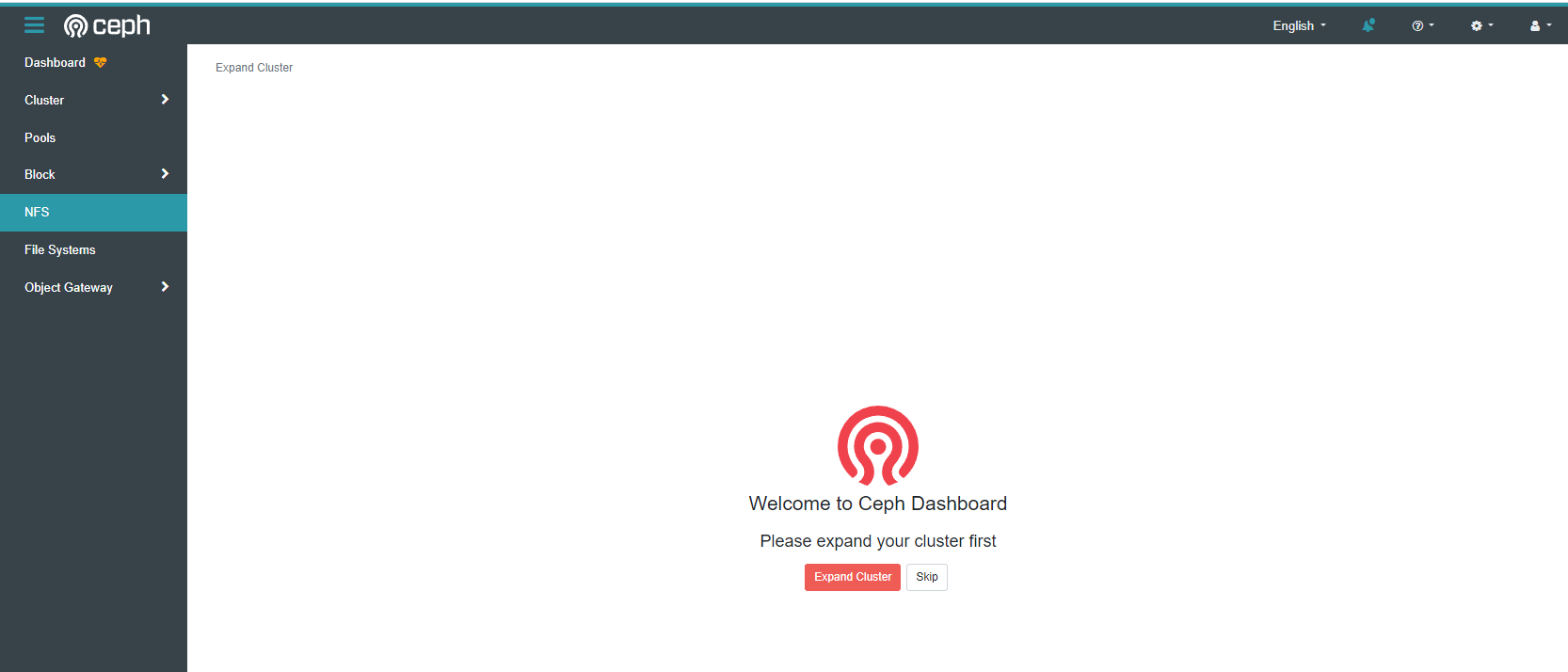

In the browser, add the hosts 192.168.1.42 and 192.168.1.43

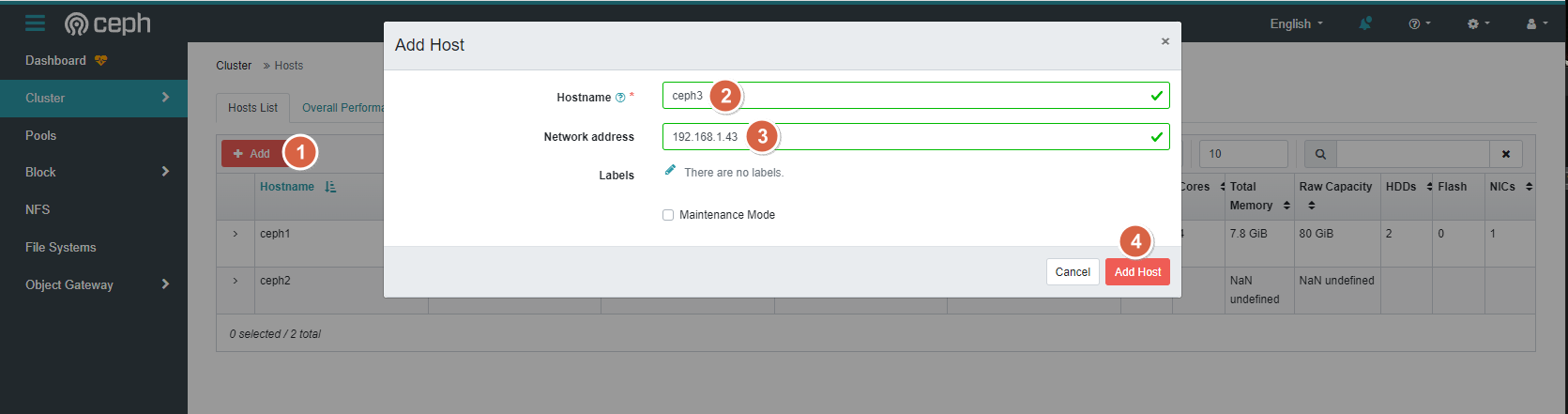
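Hosts can also be added from the ceph shell with `ceph orch host add` instead of through the dashboard. The sketch below only prints the commands so they can be reviewed first. It reads "IP hostname" lines in the same format as /etc/hosts, and the function name is illustrative.

```shell
#!/bin/sh
# Reads "IP hostname" lines on stdin and prints one
# `ceph orch host add <hostname> <ip>` command per non-empty line.
print_host_add_commands() {
    while read -r ip name rest; do
        if [ -n "$ip" ] && [ -n "$name" ]; then
            echo "ceph orch host add $name $ip"
        fi
    done
}

# Usage (inside the ceph shell; append "| sh" to actually run the commands):
#   printf '192.168.1.42 ceph2\n192.168.1.43 ceph3\n' | print_host_add_commands
```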

5. Create OSDs

First, enter the ceph shell

[root@ceph1 ~]# python3.6 /usr/sbin/cephadm shell

Inferring fsid 0bd0bee0-7704-11ee-ac45-000c295baf08

Using recent ceph image quay.io/ceph/ceph@sha256:4924a393f5ef4c00e133c13cb8297558f4d1f52731eb16841906519e5de60063

[ceph: root@ceph1 /]#

Assume the newly added disk on each of the three machines is /dev/sdb

[ceph: root@ceph1 /]# ceph orch daemon add osd ceph1:/dev/sdb

[ceph: root@ceph1 /]# ceph orch daemon add osd ceph2:/dev/sdb

[ceph: root@ceph1 /]# ceph orch daemon add osd ceph3:/dev/sdb

List the devices

[ceph: root@ceph1 /]# ceph orch device ls

HOST PATH TYPE DEVICE ID SIZE AVAILABLE REFRESHED REJECT REASONS

ceph1 /dev/sdb hdd 40.0G 34s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

ceph2 /dev/sdb hdd 40.0G 34s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

ceph3 /dev/sdb hdd 40.0G 34s ago Insufficient space (<10 extents) on vgs, LVM detected, locked

The OSDs are now visible on the dashboard as well

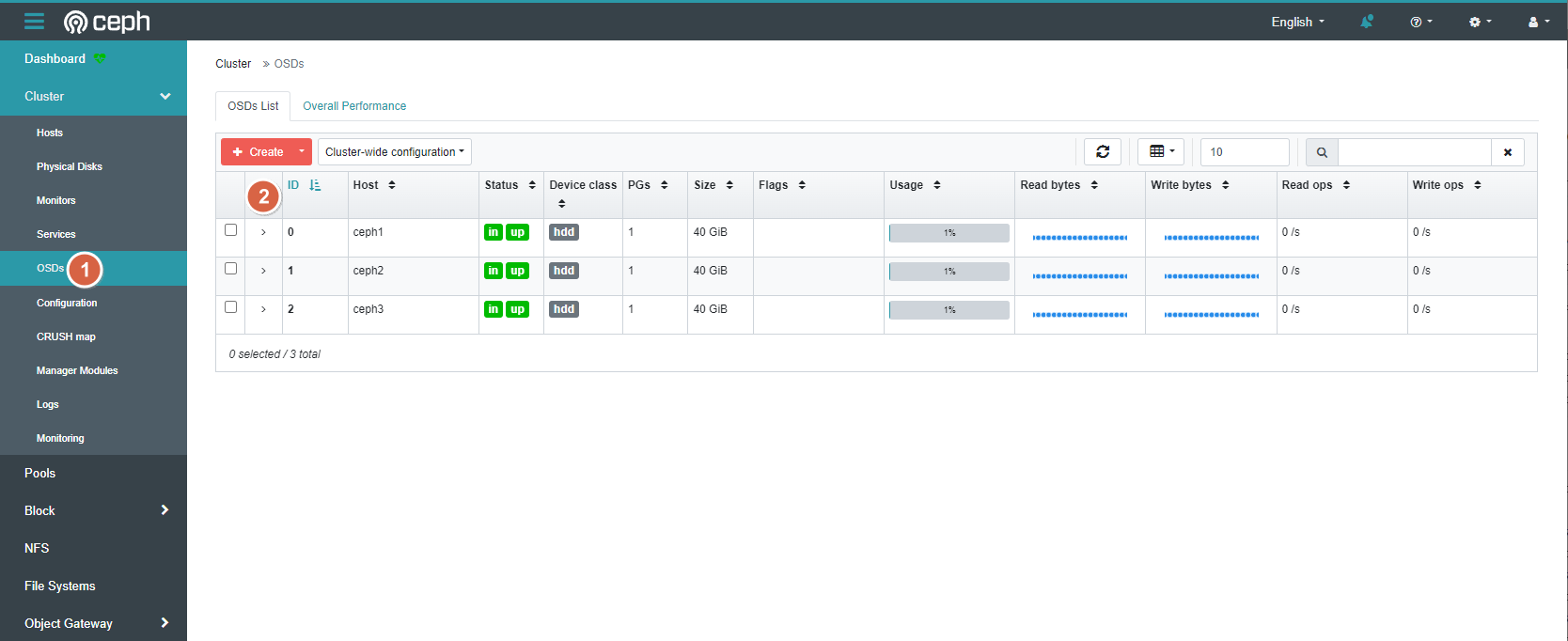

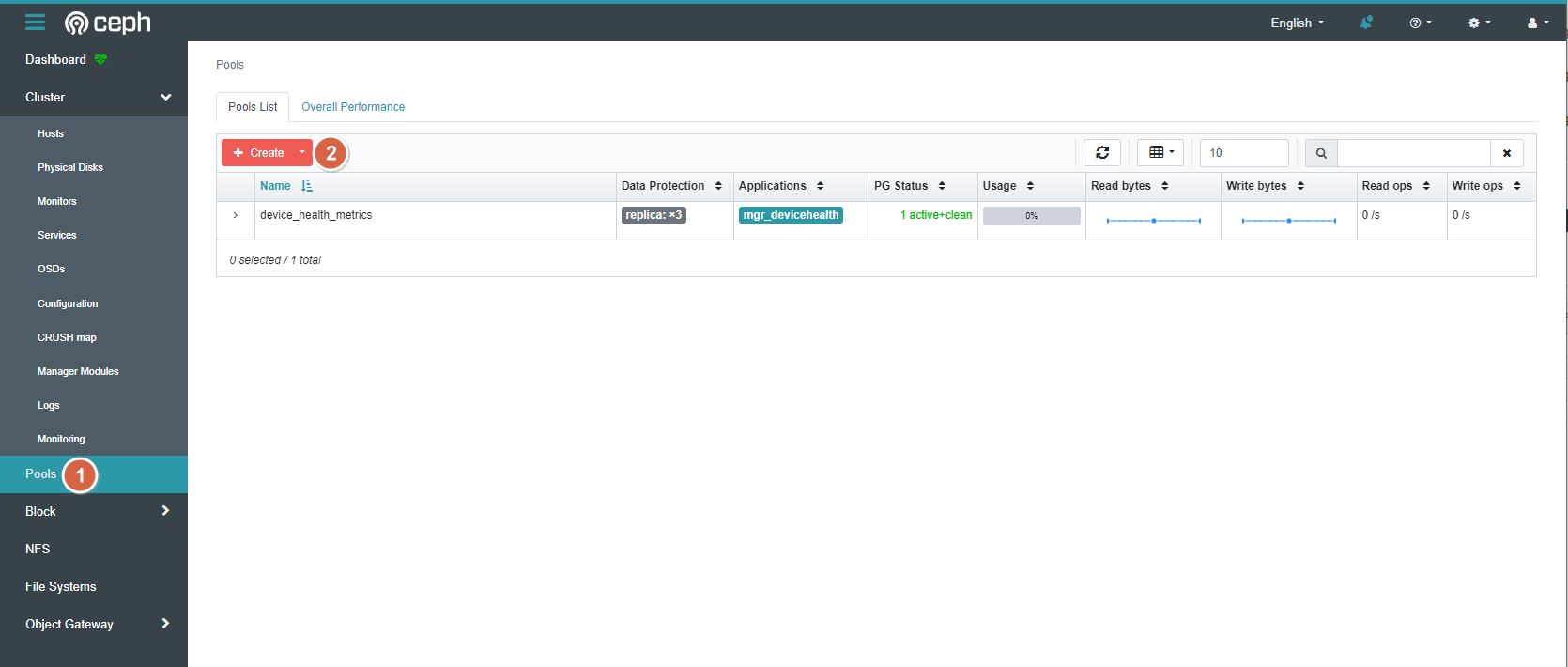

6. Create a pool

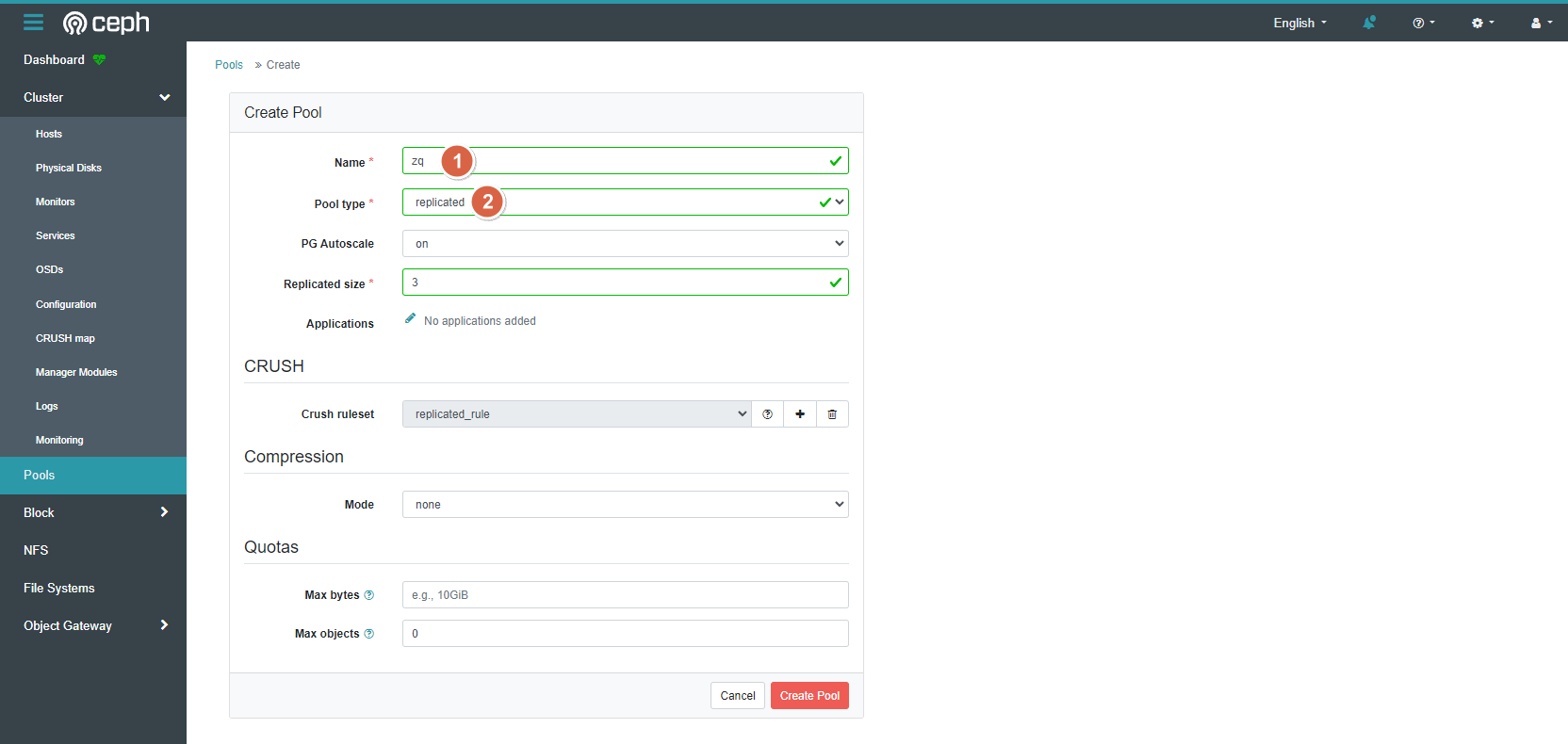

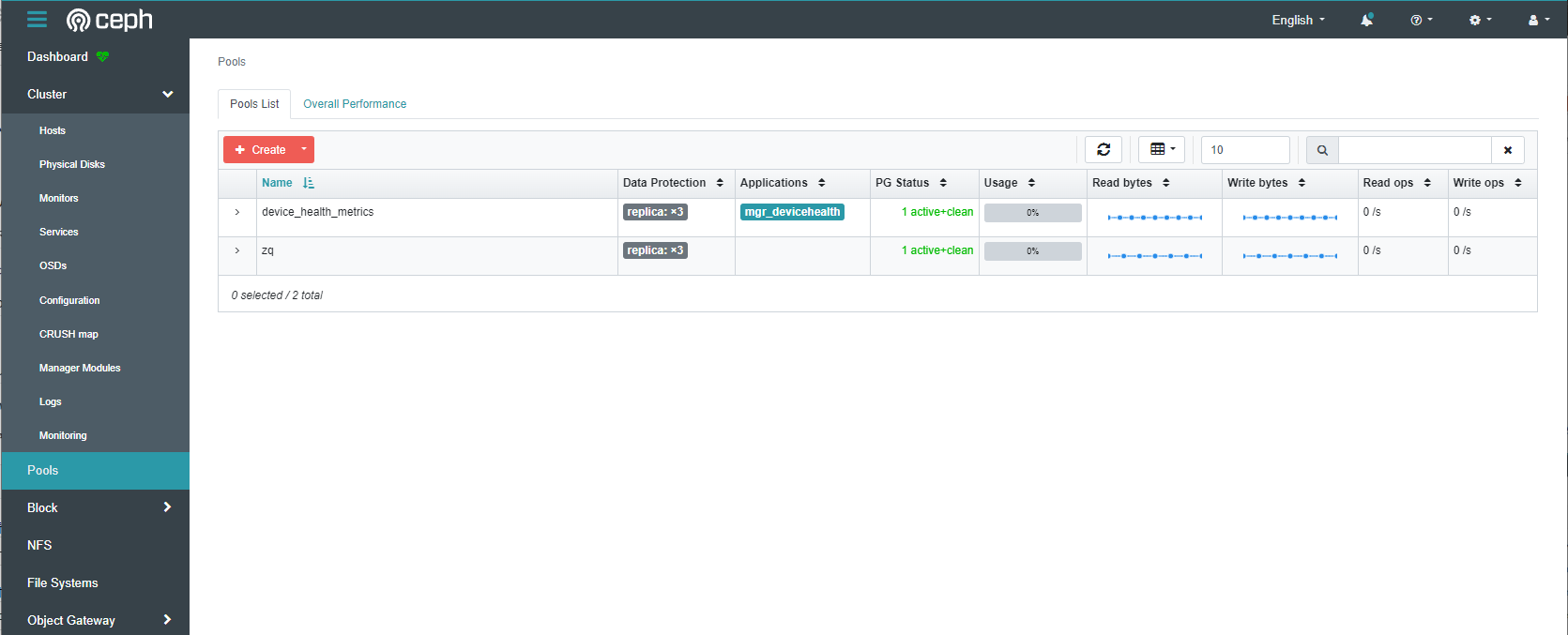

Click [Pools], then [Create]

Fill in [Name] and [Pool type], then click [Create Pool]

7. Check the cluster status. HEALTH_OK means the cluster is healthy

[ceph: root@ceph1 /]# ceph -s

cluster:

id: 4b5468be-7731-11ee-9c33-000c295baf08

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph1,ceph3,ceph2 (age 8h)

mgr: ceph1.sxjiyz(active, since 8h), standbys: ceph3.exilji

osd: 3 osds: 3 up (since 7h), 3 in (since 7h)

data:

pools: 2 pools, 33 pgs

objects: 0 objects, 0 B

usage: 871 MiB used, 119 GiB / 120 GiB avail

pgs: 33 active+clean

progress:

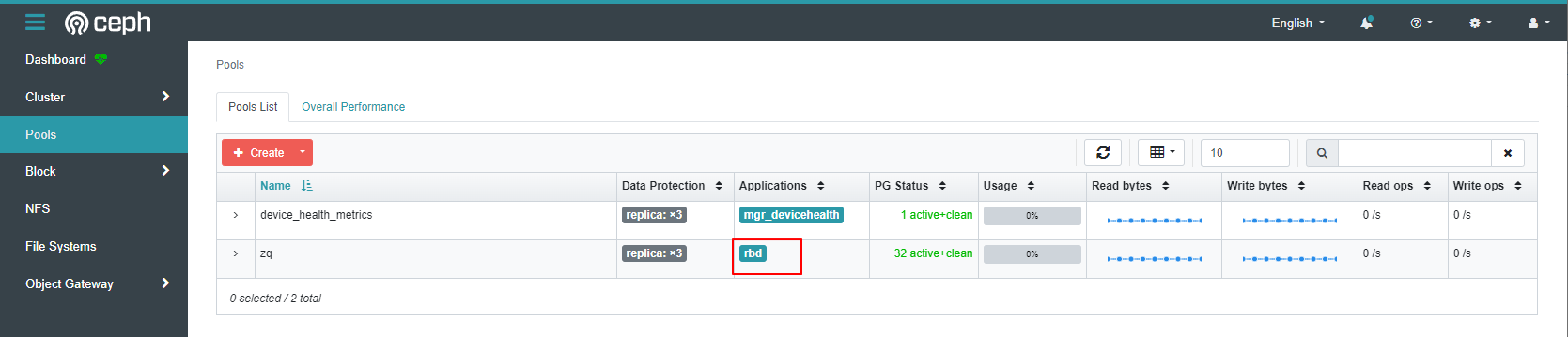
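Right after OSDs or pools are created, the cluster may take a while to settle, so a single `ceph -s` can still show a warning. The sketch below polls `ceph health`, which prints a line starting with the health state, until it reports HEALTH_OK. The retry count and the 10-second interval are arbitrary assumptions, and the function names are illustrative.

```shell
#!/bin/sh
# Succeeds when the given `ceph health` output starts with HEALTH_OK.
health_is_ok() {
    case "$1" in
        HEALTH_OK*) return 0 ;;
        *) return 1 ;;
    esac
}

# Hypothetical helper: poll `ceph health` until it reports HEALTH_OK,
# giving up after the given number of attempts (default 30, 10 s apart).
wait_for_health_ok() {
    attempts="${1:-30}"
    while [ "$attempts" -gt 0 ]; do
        if health_is_ok "$(ceph health 2>/dev/null)"; then
            echo "cluster is healthy"
            return 0
        fi
        attempts=$((attempts - 1))
        sleep 10
    done
    echo "cluster did not reach HEALTH_OK" >&2
    return 1
}

# Usage (inside the ceph shell):
#   wait_for_health_ok 30
```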

8. Enable the rbd application on the zq pool

[ceph: root@ceph1 /]# ceph osd pool application enable zq rbd

9. Initialize the pool

[ceph: root@ceph1 /]# rbd pool init zq