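ArgoCD is a powerful GitOps tool for the continuous deployment and management of Kubernetes applications. As more and more companies adopt the GitOps methodology, ArgoCD has become the tool of choice for many organizations. Monitoring ArgoCD's metrics is essential for keeping it running efficiently and catching problems early.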

1. Why Monitor ArgoCD Metrics?

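ArgoCD's metrics expose important information about its internal state and health. Monitoring them helps you:

- Performance monitoring: understand how ArgoCD performs and make sure it handles requests efficiently.
- Fault detection: quickly spot potential problems in ArgoCD, such as connection issues or sync failures.
- Capacity planning: assess how ArgoCD is used and prepare for future growth.
- Compliance: verify that ArgoCD operates within the organization's security and compliance policies.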

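2. Configuring Metrics Collection

Official documentation: https://argocd.devops.gold/operator-manual/metrics/

ArgoCD exposes a separate set of Prometheus metrics for each of its server components, so each type of metric needs its own scrape configuration: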

2.1 Application Controller Metrics

Application metrics, scraped from the argocd-metrics:8082/metrics endpoint.

Add the following annotations:

```yaml
prometheus.io/port: "8082"
prometheus.io/scrape: "true"
```

The actual change:

```shell
[root@master01 17]# kubectl edit svc argocd-metrics -n argocd
```

```yaml
apiVersion: v1
kind: Service
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app.kubernetes.io/component":"metrics","app.kubernetes.io/name":"argocd-metrics","app.kubernetes.io/part-of":"argocd"},"name":"argocd-metrics","namespace":"argocd"},"spec":{"ports":[{"name":"metrics","port":8082,"protocol":"TCP","targetPort":8082}],"selector":{"app.kubernetes.io/name":"argocd-application-controller"}}}
    prometheus.io/port: "8082"     # added
    prometheus.io/scrape: "true"   # added
  creationTimestamp: "2024-07-31T10:08:26Z"
  labels:
    app.kubernetes.io/component: metrics
    app.kubernetes.io/name: argocd-metrics
    app.kubernetes.io/part-of: argocd
  name: argocd-metrics
  namespace: argocd
```
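`kubectl edit` opens an interactive editor. A non-interactive alternative is sketched below; it is not the author's procedure. The two annotations can be kept in a small JSON merge patch file, here given the hypothetical name `argocd-metrics-patch.yaml`:

```yaml
# argocd-metrics-patch.yaml (hypothetical file name)
# JSON merge patch: only the keys listed here are added or changed;
# existing annotations such as last-applied-configuration are left intact.
metadata:
  annotations:
    prometheus.io/scrape: "true"   # quoted: annotation values must be strings
    prometheus.io/port: "8082"     # metrics port of the application controller
```

You can then apply it with `kubectl patch svc argocd-metrics -n argocd --type merge --patch-file argocd-metrics-patch.yaml`. The same file with port `"8083"` or `"8084"` covers `argocd-server-metrics` and `argocd-repo-server`. For a one-off change, `kubectl annotate svc argocd-metrics -n argocd prometheus.io/scrape=true prometheus.io/port=8082` has the same effect.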

2.2 API Server Metrics

Metrics on API server request and response activity (total requests, response codes, and so on), scraped from the argocd-server-metrics:8083/metrics endpoint.

Add the following annotations:

```yaml
prometheus.io/port: "8083"
prometheus.io/scrape: "true"
```

The actual change:

```shell
[root@master01 17]# kubectl edit svc argocd-server-metrics -n argocd
```

```yaml
apiVersion: v1
kind: Service
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app.kubernetes.io/component":"server","app.kubernetes.io/name":"argocd-server-metrics","app.kubernetes.io/part-of":"argocd"},"name":"argocd-server-metrics","namespace":"argocd"},"spec":{"ports":[{"name":"metrics","port":8083,"protocol":"TCP","targetPort":8083}],"selector":{"app.kubernetes.io/name":"argocd-server"}}}
    prometheus.io/port: "8083"     # added
    prometheus.io/scrape: "true"   # added
  creationTimestamp: "2024-07-31T10:08:27Z"
  labels:
    app.kubernetes.io/component: server
    app.kubernetes.io/name: argocd-server-metrics
    app.kubernetes.io/part-of: argocd
  name: argocd-server-metrics
  namespace: argocd
```
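The API server's request and response metrics include the gRPC counter `grpc_server_handled_total`, labelled with the gRPC status code `grpc_code`. The recording rules below are a sketch of how to turn that counter into a request rate and an error ratio. They assume the job name `argocd-server-metrics` from the static scrape configuration in section 2.4. The file name and the recorded metric names are hypothetical. Some non-`OK` codes, such as `NotFound` or `Unauthenticated`, can be normal client behaviour, so treat the ratio as a trend rather than a hard error rate.

```yaml
# argocd-server.rules (hypothetical; picked up by a rule_files glob such as /etc/prometheus/rules/*.rules)
groups:
  - name: argocd-server
    rules:
      # Finished gRPC calls per second on the API server, split by status code
      - record: argocd_server:grpc_server_handled_by_code:rate5m
        expr: sum by (grpc_code) (rate(grpc_server_handled_total{job="argocd-server-metrics"}[5m]))
      # Share of calls that did not finish with status OK
      - record: argocd_server:grpc_server_not_ok:ratio_rate5m
        expr: |
          sum(rate(grpc_server_handled_total{job="argocd-server-metrics", grpc_code!="OK"}[5m]))
          /
          sum(rate(grpc_server_handled_total{job="argocd-server-metrics"}[5m]))
```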

2.3 Repo Server Metrics

Repo server metrics, scraped from the argocd-repo-server:8084/metrics endpoint.

Add the following annotations:

```yaml
prometheus.io/port: "8084"
prometheus.io/scrape: "true"
```

The actual change:

```shell
[root@master01 17]# kubectl edit svc argocd-repo-server -n argocd
```

```yaml
apiVersion: v1
kind: Service
metadata:
  annotations:
    kubectl.kubernetes.io/last-applied-configuration: |
      {"apiVersion":"v1","kind":"Service","metadata":{"annotations":{},"labels":{"app.kubernetes.io/component":"repo-server","app.kubernetes.io/name":"argocd-repo-server","app.kubernetes.io/part-of":"argocd"},"name":"argocd-repo-server","namespace":"argocd"},"spec":{"ports":[{"name":"server","port":8081,"protocol":"TCP","targetPort":8081},{"name":"metrics","port":8084,"protocol":"TCP","targetPort":8084}],"selector":{"app.kubernetes.io/name":"argocd-repo-server"}}}
    prometheus.io/port: "8084"     # added
    prometheus.io/scrape: "true"   # added
  creationTimestamp: "2024-07-31T10:08:27Z"
  labels:
    app.kubernetes.io/component: repo-server
    app.kubernetes.io/name: argocd-repo-server
    app.kubernetes.io/part-of: argocd
  name: argocd-repo-server
  namespace: argocd
```

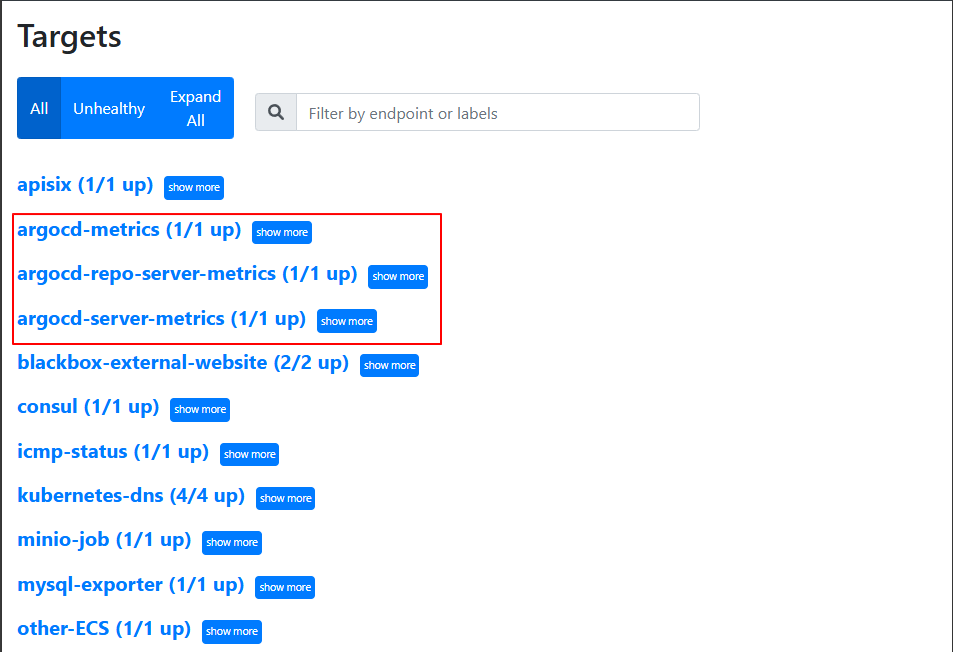
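The repo server's metrics include counters and latency histograms for the Git operations it performs: `argocd_git_request_total` and `argocd_git_request_duration_seconds`, both labelled with `request_type`, such as `ls-remote` or `fetch`. The rules below are a sketch for tracking Git load and latency. The job name `argocd-repo-server-metrics` comes from the static configuration in section 2.4. The file and recorded metric names are hypothetical. Label names can change between ArgoCD releases, so compare them with your `/metrics` output first.

```yaml
# argocd-repo-server.rules (hypothetical file name)
groups:
  - name: argocd-repo-server
    rules:
      # Git requests per second issued by the repo server, by request type
      - record: argocd_repo_server:git_request:rate5m
        expr: sum by (request_type) (rate(argocd_git_request_total{job="argocd-repo-server-metrics"}[5m]))
      # 95th percentile Git request duration in seconds, by request type
      - record: argocd_repo_server:git_request_duration_seconds:p95
        expr: |
          histogram_quantile(0.95,
            sum by (le, request_type) (
              rate(argocd_git_request_duration_seconds_bucket{job="argocd-repo-server-metrics"}[5m])))
```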

2.4 Verifying Data Collection

Edit the Prometheus static configuration file (kept in a ConfigMap here) and add the following scrape jobs:

```yaml
########## ArgoCD monitoring ##########
- job_name: 'argocd-metrics'
  static_configs:
    - targets: ['argocd-metrics.argocd.svc.cluster.local:8082']

- job_name: 'argocd-server-metrics'
  static_configs:
    - targets: ['argocd-server-metrics.argocd.svc.cluster.local:8083']

- job_name: 'argocd-repo-server-metrics'
  static_configs:
    - targets: ['argocd-repo-server.argocd.svc.cluster.local:8084']
```
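The `prometheus.io/scrape` and `prometheus.io/port` annotations added in 2.1 to 2.3 are a convention, not built-in Prometheus behaviour. The static jobs above ignore them. They only take effect in a job whose relabeling rules read them. The job below is a sketch of such a job, offered as an alternative to the three static jobs rather than an addition (running both would scrape every series twice). The job name `argocd-annotated-services` is hypothetical. Prometheus also needs RBAC permission to list and watch Endpoints, Services and Pods in the `argocd` namespace.

```yaml
########## ArgoCD monitoring via annotations (alternative sketch) ##########
- job_name: 'argocd-annotated-services'
  kubernetes_sd_configs:
    - role: endpoints
      namespaces:
        names: ['argocd']            # only discover objects in the ArgoCD namespace
  relabel_configs:
    # Keep only Services annotated prometheus.io/scrape: "true"
    - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
      action: keep
      regex: "true"
    # Keep only the port named "metrics" (argocd-repo-server also exposes port 8081)
    - source_labels: [__meta_kubernetes_endpoint_port_name]
      action: keep
      regex: metrics
    # Point the target at the port given in the prometheus.io/port annotation
    - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
      action: replace
      regex: ([^:]+)(?::\d+)?;(\d+)
      replacement: $1:$2
      target_label: __address__
    # Readable labels for queries and dashboards
    - source_labels: [__meta_kubernetes_service_name]
      target_label: service
    - source_labels: [__meta_kubernetes_pod_name]
      target_label: pod
```

One design difference matters here. The static targets resolve through each Service's cluster DNS name, so every scrape reaches only one Pod behind that Service. Endpoint discovery yields one target per Pod, which makes a difference when a component such as the application controller runs several replicas.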

Full configuration file:

```shell
[root@master01 7]# vim prometheus-config.yaml
```

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-config
  namespace: monitor
data:
  prometheus.yml: |
    global:
      scrape_interval: 15s
      evaluation_interval: 15s
      external_labels:
        cluster: "kubernetes"

    ############ Alertmanager server address ###################
    alerting:
      alertmanagers:
        - static_configs:
            - targets: ["alertmanager:9093"]

    ############ Scrape jobs ###################
    scrape_configs:
      ########## ArgoCD monitoring ##########
      - job_name: 'argocd-metrics'
        static_configs:
          - targets: ['argocd-metrics.argocd.svc.cluster.local:8082']

      - job_name: 'argocd-server-metrics'
        static_configs:
          - targets: ['argocd-server-metrics.argocd.svc.cluster.local:8083']

      - job_name: 'argocd-repo-server-metrics'
        static_configs:
          - targets: ['argocd-repo-server.argocd.svc.cluster.local:8084']

      ########## Prometheus monitoring ##########
      - job_name: prometheus
        static_configs:
          - targets: ['127.0.0.1:9090']
            labels:
              instance: prometheus

      ########## APISIX monitoring ##########
      - job_name: "apisix"
        scrape_interval: 15s
        metrics_path: "/apisix/prometheus/metrics"
        static_configs:
          - targets: [metrics.example.com]

      ########## MinIO monitoring ##########
      - job_name: minio-job
        bearer_token: <prometheus-bearer-token>
        metrics_path: /minio/v2/metrics/cluster
        scheme: http
        static_configs:
          - targets: [s3.example.com]

      ########## kube-apiserver monitoring ##########
      - job_name: kube-apiserver
        kubernetes_sd_configs:
          - role: endpoints
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name]
            action: keep
            regex: default;kubernetes
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## kube-controller-manager monitoring ##########
      - job_name: 'kube-controller-manager'
        # Use Kubernetes Pod discovery
        kubernetes_sd_configs:
          - role: pod
        # Force HTTPS
        scheme: https
        # TLS settings (certificate verification skipped; test environments only)
        tls_config:
          insecure_skip_verify: true
        # Authenticate with the ServiceAccount token
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          # Keep only Pods labeled component=kube-controller-manager
          - source_labels: [__meta_kubernetes_pod_label_component]
            regex: kube-controller-manager
            action: keep
          # Rewrite the target address to Pod IP + port 10257
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: "${1}:10257"
          # Force HTTPS again (redundant, but explicit)
          - source_labels: []
            regex: .*
            target_label: __scheme__
            replacement: https
          # Attach metadata labels
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## kube-scheduler monitoring ##########
      - job_name: 'kube-scheduler'
        kubernetes_sd_configs:
          - role: pod
        scheme: https
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
          - source_labels: [__meta_kubernetes_pod_label_component]
            regex: kube-scheduler
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: "${1}:10259"
          - source_labels: []
            regex: .*
            target_label: __scheme__
            replacement: https
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## kube-state-metrics monitoring ##########
      - job_name: kube-state-metrics
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels: [__meta_kubernetes_service_name]
            regex: kube-state-metrics
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:8080
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## CoreDNS monitoring ##########
      - job_name: coredns
        kubernetes_sd_configs:
          - role: endpoints
        relabel_configs:
          - source_labels:
              - __meta_kubernetes_service_label_k8s_app
            regex: kube-dns
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:9153
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            target_label: service
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## etcd monitoring ##########
      - job_name: etcd
        kubernetes_sd_configs:
          - role: pod
        relabel_configs:
          - source_labels:
              - __meta_kubernetes_pod_label_component
            regex: etcd
            action: keep
          - source_labels: [__meta_kubernetes_pod_ip]
            regex: (.+)
            target_label: __address__
            replacement: ${1}:2381
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## kubelet monitoring ##########
      - job_name: kubelet
        metrics_path: /metrics/cadvisor
        scheme: https
        tls_config:
          insecure_skip_verify: true
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## k8s-node monitoring ##########
      - job_name: k8s-nodes
        kubernetes_sd_configs:
          - role: node
        relabel_configs:
          - source_labels: [__address__]
            regex: '(.*):10250'
            replacement: '${1}:9100'
            target_label: __address__
            action: replace
          - action: labelmap
            regex: __meta_kubernetes_node_label_(.+)
          - source_labels: [__meta_kubernetes_endpoints_name]
            action: replace
            target_label: endpoint
          - source_labels: [__meta_kubernetes_pod_name]
            action: replace
            target_label: pod
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            target_label: namespace

      ########## DNS monitoring ##########
      - job_name: "kubernetes-dns"
        metrics_path: /probe   # /probe, not /metrics
        params:
          module: [dns_tcp]    # use the DNS-over-TCP module
        static_configs:
          - targets:
              - kube-dns.kube-system:53   # do not omit the port number
              - 8.8.4.4:53
              - 8.8.8.8:53
              - 223.5.5.5:53
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115   # Blackbox Exporter Service address; must match its Service definition

      ########## ICMP monitoring ##########
      - job_name: icmp-status
        metrics_path: /probe
        params:
          module: [icmp]
        static_configs:
          - targets:
              - <node-ip>
            labels:
              group: icmp
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115

      ########## HTTP monitoring ##########
      - job_name: 'kubernetes-services'
        metrics_path: /probe
        params:
          module:   ## use the http_get_2xx and http_get_3xx modules
            - "http_get_2xx"
            - "http_get_3xx"
        kubernetes_sd_configs:   ## Kubernetes service discovery with the Service role
          - role: service
        relabel_configs:   ## only probe Services annotated prometheus.io/http_probe: "true"
          - action: keep
            source_labels: [__meta_kubernetes_service_annotation_prometheus_io_http_probe]
            regex: "true"
          - action: replace
            source_labels:
              - "__meta_kubernetes_service_name"
              - "__meta_kubernetes_namespace"
              - "__meta_kubernetes_service_annotation_prometheus_io_http_probe_port"
              - "__meta_kubernetes_service_annotation_prometheus_io_http_probe_path"
            target_label: __param_target
            regex: (.+);(.+);(.+);(.+)
            replacement: $1.$2:$3$4
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115   ## Blackbox Exporter Service address
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_service_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_service_name]
            target_label: kubernetes_name

      ########## TCP monitoring ##########
      - job_name: "service-tcp-probe"
        scrape_interval: 1m
        metrics_path: /probe
        # Use the tcp_connect prober from the blackbox exporter configuration
        params:
          module: [tcp_connect]
        kubernetes_sd_configs:
          - role: service
        relabel_configs:
          # Keep Services annotated prometheus.io/scrape: "true" and prometheus.io/tcp-probe: "true"
          - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape, __meta_kubernetes_service_annotation_prometheus_io_tcp_probe]
            action: keep
            regex: true;true
          # Copy __meta_kubernetes_service_name into the service_name label
          - source_labels: [__meta_kubernetes_service_name]
            action: replace
            regex: (.*)
            target_label: service_name
          # Copy __meta_kubernetes_namespace into the namespace label
          - source_labels: [__meta_kubernetes_namespace]
            action: replace
            regex: (.*)
            target_label: namespace
          # Set the probe target (and therefore instance) to `clusterIP:port`
          - source_labels: [__meta_kubernetes_service_cluster_ip, __meta_kubernetes_service_annotation_prometheus_io_http_probe_port]
            action: replace
            regex: (.*);(.*)
            target_label: __param_target
            replacement: $1:$2
          - source_labels: [__param_target]
            target_label: instance
          # Send the scrape to `blackbox-exporter.monitor:9115`
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115

      ########## Ingress monitoring ##########
      - job_name: 'blackbox-k8s-ingresses'
        scrape_interval: 30s
        scrape_timeout: 10s
        metrics_path: /probe
        params:
          module: [http_get_2xx]   # HTTP module defined in the blackbox exporter
        kubernetes_sd_configs:
          - role: ingress          # Ingress-based service discovery
        relabel_configs:
          # Only discover Ingresses annotated prometheus.io/http_probe: "true"
          - source_labels: [__meta_kubernetes_ingress_annotation_prometheus_io_http_probe]
            action: keep
            regex: "true"
          - source_labels: [__meta_kubernetes_ingress_scheme, __address__, __meta_kubernetes_ingress_path]
            regex: (.+);(.+);(.+)
            replacement: ${1}://${2}${3}
            target_label: __param_target
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115
          - source_labels: [__param_target]
            target_label: instance
          - action: labelmap
            regex: __meta_kubernetes_ingress_label_(.+)
          - source_labels: [__meta_kubernetes_namespace]
            target_label: kubernetes_namespace
          - source_labels: [__meta_kubernetes_ingress_name]
            target_label: kubernetes_name

      ########## External website monitoring ##########
      - job_name: "blackbox-external-website"
        scrape_interval: 30s
        scrape_timeout: 15s
        metrics_path: /probe
        params:
          module: [http_get_2xx]
        static_configs:
          - targets:
              - https://www.baidu.com   # replace with your company's public-facing domains
              - https://www.jd.com
        relabel_configs:
          - source_labels: [__address__]
            target_label: __param_target
          - source_labels: [__param_target]
            target_label: instance
          - target_label: __address__
            replacement: blackbox-exporter.monitor:9115

      ########## Cloud ECS host monitoring ##########
      - job_name: 'other-ECS'
        static_configs:
          - targets: ['101.201.68.158:9100']
            labels:
              hostname: 'test-node-exporter'

      ########## Process monitoring ##########
      - job_name: 'process-exporter'
        static_configs:
          - targets: ['<node-ip>:9256']

      ########## MySQL monitoring ##########
      - job_name: 'mysql-exporter'
        static_configs:
          - targets: ['<node-ip>:9104']

      ########## Consul monitoring ##########
      - job_name: consul
        honor_labels: true
        metrics_path: /metrics
        scheme: http
        consul_sd_configs:           # Consul-based service discovery
          - server: <node-ip>:18500  # Consul listen address
            services: []             # match every service registered in Consul
        relabel_configs:             # everything below rewrites labels
          - source_labels: ['__meta_consul_tags']   # copy __meta_consul_tags into servername
            target_label: 'servername'
          - source_labels: ['__meta_consul_dc']     # copy __meta_consul_dc into idc
            target_label: 'idc'
          - source_labels: ['__meta_consul_service']
            regex: "consul"          # match the service named "consul"
            action: drop             # and drop it

    ############ Alerting rule file location ###################
    rule_files:
      - /etc/prometheus/rules/*.rules
```

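Apply the configuration and hot-reload Prometheus:

```shell
[root@master01 7]# kubectl apply -f prometheus-config.yaml
# Hot reload (the /-/reload endpoint only works when Prometheus runs with --web.enable-lifecycle)
[root@master01 7]# curl -XPOST http://prometheus.example.com/-/reload
```

A ConfigMap mounted as a volume reaches the Pod only after a short delay, and a ConfigMap mounted with subPath is never updated. Make sure the new file is in place before triggering the reload.

Open http://prometheus.example.com/ and check on the Targets page of the Prometheus web UI that the ArgoCD metrics are being scraped.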
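The Targets page is a one-off check. To be alerted if a target later stops being scraped, add an alert on the built-in `up` metric (0 when the last scrape failed) to a file matched by the `rule_files` glob `/etc/prometheus/rules/*.rules`. The sketch below assumes the job names from the static configuration above. The file name, alert name and 5-minute threshold are assumptions. How the file gets into the Prometheus Pod, for example through a separate ConfigMap mounted at `/etc/prometheus/rules`, depends on your deployment.

```yaml
# argocd.rules (hypothetical file name, matched by /etc/prometheus/rules/*.rules)
groups:
  - name: argocd-scrape
    rules:
      - alert: ArgoCDMetricsTargetDown
        # up == 0 means the most recent scrape of this target failed
        expr: up{job=~"argocd-metrics|argocd-server-metrics|argocd-repo-server-metrics"} == 0
        for: 5m
        labels:
          severity: warning
        annotations:
          summary: "ArgoCD metrics target {{ $labels.instance }} (job {{ $labels.job }}) cannot be scraped"
```

Running the same selector, `up{job=~"argocd.*"}`, in the expression browser is a quick manual equivalent.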

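2.5 With the Prometheus Operator

Use the following ServiceMonitor manifests. Set metadata.namespace to the namespace where ArgoCD is installed (argocd in this article), and change metadata.labels.release to the label that your Prometheus selects.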
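The label your Prometheus selects is set in the `serviceMonitorSelector` field of its Prometheus custom resource, which you can inspect with `kubectl get prometheus -A -o yaml`. The excerpt below sketches the relevant fields. The resource name and namespace are hypothetical. With the kube-prometheus-stack Helm chart, the `release` value typically matches the Helm release name.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: Prometheus
metadata:
  name: prometheus              # hypothetical name
  namespace: monitoring         # hypothetical namespace of the Operator-managed Prometheus
spec:
  # Only ServiceMonitors carrying this label are loaded; it must equal
  # metadata.labels.release in the ArgoCD ServiceMonitors.
  serviceMonitorSelector:
    matchLabels:
      release: prometheus-operator
  # An empty selector lets Prometheus pick up ServiceMonitors from all
  # namespaces, including argocd (omitting it limits discovery to its own namespace).
  serviceMonitorNamespaceSelector: {}
```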

1) argocd-metrics

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: argocd-metrics
  namespace: argocd                # namespace where ArgoCD is installed
  labels:
    release: prometheus-operator   # change to the label your Prometheus selects
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: argocd-metrics
  endpoints:
    - port: metrics
```

2) argocd-server-metrics

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: argocd-server-metrics
  namespace: argocd                # namespace where ArgoCD is installed
  labels:
    release: prometheus-operator   # change to the label your Prometheus selects
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: argocd-server-metrics
  endpoints:
    - port: metrics
```

3) argocd-repo-server

```yaml
apiVersion: monitoring.coreos.com/v1
kind: ServiceMonitor
metadata:
  name: argocd-repo-server-metrics
  namespace: argocd                # namespace where ArgoCD is installed
  labels:
    release: prometheus-operator   # change to the label your Prometheus selects
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: argocd-repo-server
  endpoints:
    - port: metrics
```
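With the Operator, alerting rules are also declared as custom resources. The PrometheusRule below is a sketch of application-level alerts built on two controller metrics listed in the ArgoCD metrics documentation. `argocd_app_info` is a series with value 1 per application, labelled with `name`, `sync_status` and `health_status`. `argocd_app_sync_total` counts syncs, labelled with the operation `phase`. The resource name, alert names and thresholds are assumptions. So is the `release` label, which must match the Prometheus resource's `ruleSelector`.

```yaml
apiVersion: monitoring.coreos.com/v1
kind: PrometheusRule
metadata:
  name: argocd-alerts                # hypothetical name
  namespace: argocd
  labels:
    release: prometheus-operator     # assumption: must match the Prometheus ruleSelector
spec:
  groups:
    - name: argocd-applications
      rules:
        # Application has stayed OutOfSync for 15 minutes
        - alert: ArgoCDAppOutOfSync
          expr: argocd_app_info{sync_status="OutOfSync"} > 0
          for: 15m
          labels:
            severity: warning
          annotations:
            summary: "Application {{ $labels.name }} has been OutOfSync for 15 minutes"
        # Application health reported as Degraded
        - alert: ArgoCDAppDegraded
          expr: argocd_app_info{health_status="Degraded"} > 0
          for: 10m
          labels:
            severity: critical
          annotations:
            summary: "Application {{ $labels.name }} is Degraded"
        # At least one sync ended in Error or Failed during the last 10 minutes
        - alert: ArgoCDAppSyncFailed
          expr: increase(argocd_app_sync_total{phase=~"Error|Failed"}[10m]) > 0
          labels:
            severity: warning
          annotations:
            summary: "Sync of application {{ $labels.name }} failed"
```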

3. Dashboards

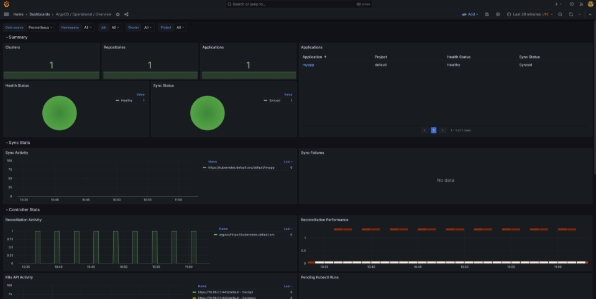

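Official dashboard: import the Argo CD dashboard into Grafana using ID 14584.

Note: this dashboard has not been updated since it was published, so some panels do not display data correctly and must be adjusted manually.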
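When a panel shows no data, run its query in the Prometheus expression browser. That usually shows whether a metric or label name has changed. The recording rules below are a starting point for rebuilding the basic panels from application controller metrics: application counts by health and sync status, and reconcile latency. They are a sketch only. The metric names come from the ArgoCD metrics documentation linked in section 2. The job name `argocd-metrics` is taken from the static configuration in section 2.4. The file and recorded metric names are hypothetical. Check label names against your `/metrics` output, since they can change between releases.

```yaml
# argocd-dashboard.rules (hypothetical file name)
groups:
  - name: argocd-dashboard
    rules:
      # Number of applications per health status (Healthy, Degraded, Progressing, ...)
      - record: argocd:app_count:by_health_status
        expr: sum by (health_status) (argocd_app_info{job="argocd-metrics"})
      # Number of applications per sync status (Synced, OutOfSync, ...)
      - record: argocd:app_count:by_sync_status
        expr: sum by (sync_status) (argocd_app_info{job="argocd-metrics"})
      # 95th percentile application reconcile time in seconds
      - record: argocd:app_reconcile_seconds:p95
        expr: |
          histogram_quantile(0.95,
            sum by (le) (rate(argocd_app_reconcile_bucket{job="argocd-metrics"}[5m])))
```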

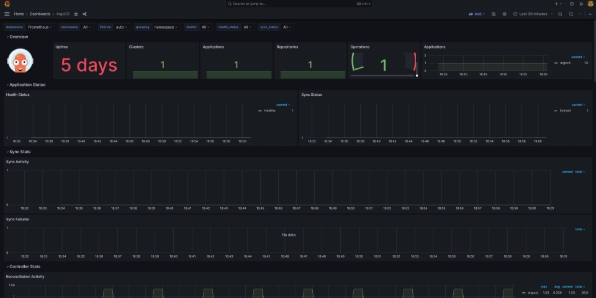

Dashboard 2: ID 19993

Its data is simple and clear, its layout is easy to read, and it is still being actively updated.